One problem AI users often face is the constant need to switch chat interfaces.

ChatGPT may be good at many things, but Perplexity is better at searching the web and answering your questions.

In fact, you may feel like asking the same question to another AI model when you are not satisfied with the current AI's answer.

But logging into another AI and then copy-pasting the same question is a cumbersome task.

This is why there are tools that let you use more than one AI model from a single interface. However, most such services are paid.

And this is where LibreChat comes into the picture.

Let's dive in and discover how this game-changing platform can improve your digital experience.

What is LibreChat AI?

LibreChat AI is an open-source platform that allows users to chat and interact with various AI models through a unified interface. You can use OpenAI, Gemini, Anthropic and other AI models using their API. You may also use Ollama as an endpoint and use LibreChat to interact with local LLMs. It can be installed locally or deployed on a server.

LibreChat is designed to be highly customizable and supports a wide range of AI providers and services. Let me summarize its main features:

- Free and Open Source: Accessible to everyone without any costs.
- Customization: Offers extensive options to tailor the platform to individual preferences.
- Multi-AI Support: Integrates with numerous AI models and services.
- Unified Interface: Provides a consistent experience for interacting with different AI models.

Installing LibreChat

Getting LibreChat AI up and running is a straightforward process, with two primary methods: NPM and Docker installation.

While both options offer advantages, Docker is my preferred choice for its simplicity and efficiency. However, we'll explore both in this article.

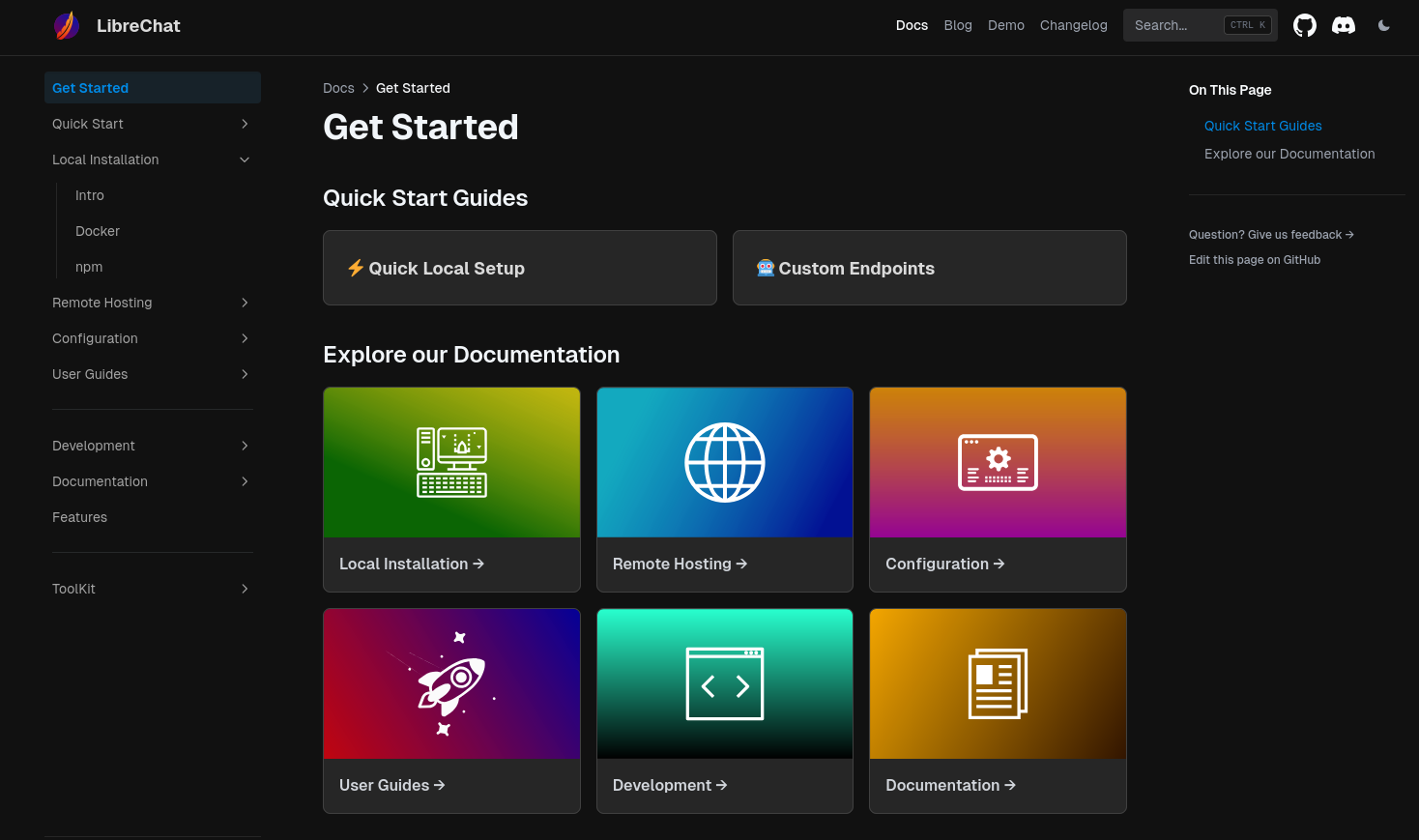

You can also refer to the official documentation for detailed installation instructions on LibreChat. I must say that they've done a great job by providing a comprehensive guide covering every step of the process.

Method 1: Install LibreChat with NPM

Before you begin with the installation, make sure that you have all the prerequisites for our project:

Prerequisites: Git, Node.js (with npm), and MongoDB, all of which are used in the steps below.

Once they are installed, you can move forward with setting up your OpenAI clone interface.

Preparing the installation environment

First, you need to clone the official LibreChat repository since it contains all the files you need to build LibreChat:

git clone https://github.com/danny-avila/LibreChat.git

Navigate to the cloned directory:

cd LibreChat

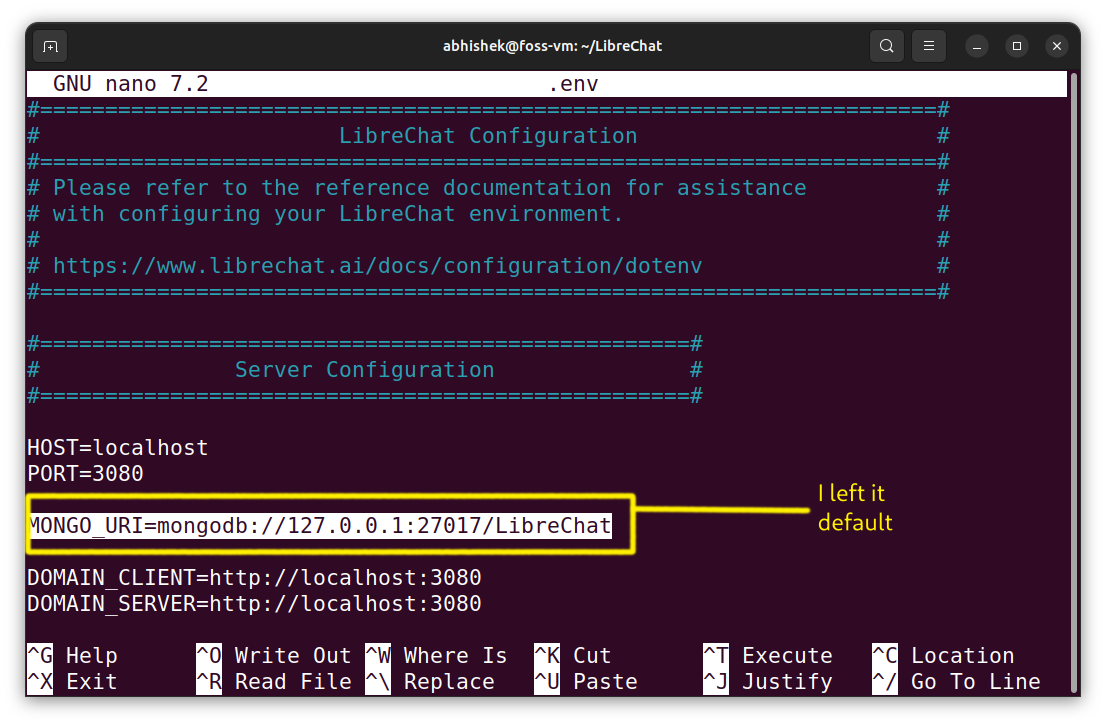

Now create a .env file from .env.example:

cp .env.example .env

🚧

Edit the newly created .env file to update the MONGO_URI with your own.
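If you would rather script that edit than open the file by hand, here is a minimal sketch. The helper name set_env_var is my own and not something LibreChat ships, and the URI in the usage note assumes a MongoDB server running locally on its default port 27017; swap in your own connection string.

```shell
# set_env_var FILE KEY VALUE  (hypothetical helper, not part of LibreChat)
# Drops any existing "KEY=" line from FILE and appends "KEY=VALUE" at the end.
set_env_var() {
  env_file="$1"; env_key="$2"; env_value="$3"
  env_tmp="$(mktemp)"
  # grep -v exits non-zero when nothing is left to print, hence "|| true"
  grep -v "^${env_key}=" "$env_file" > "$env_tmp" || true
  printf '%s=%s\n' "$env_key" "$env_value" >> "$env_tmp"
  mv "$env_tmp" "$env_file"
}
```

From inside the LibreChat directory you could then run `set_env_var .env MONGO_URI "mongodb://127.0.0.1:27017/LibreChat"`. Note that the key ends up as the last line of the file, which is harmless for a .env file.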

Building LibreChat

Once the preparation steps are finished, we can build the project from source in a few simple steps.

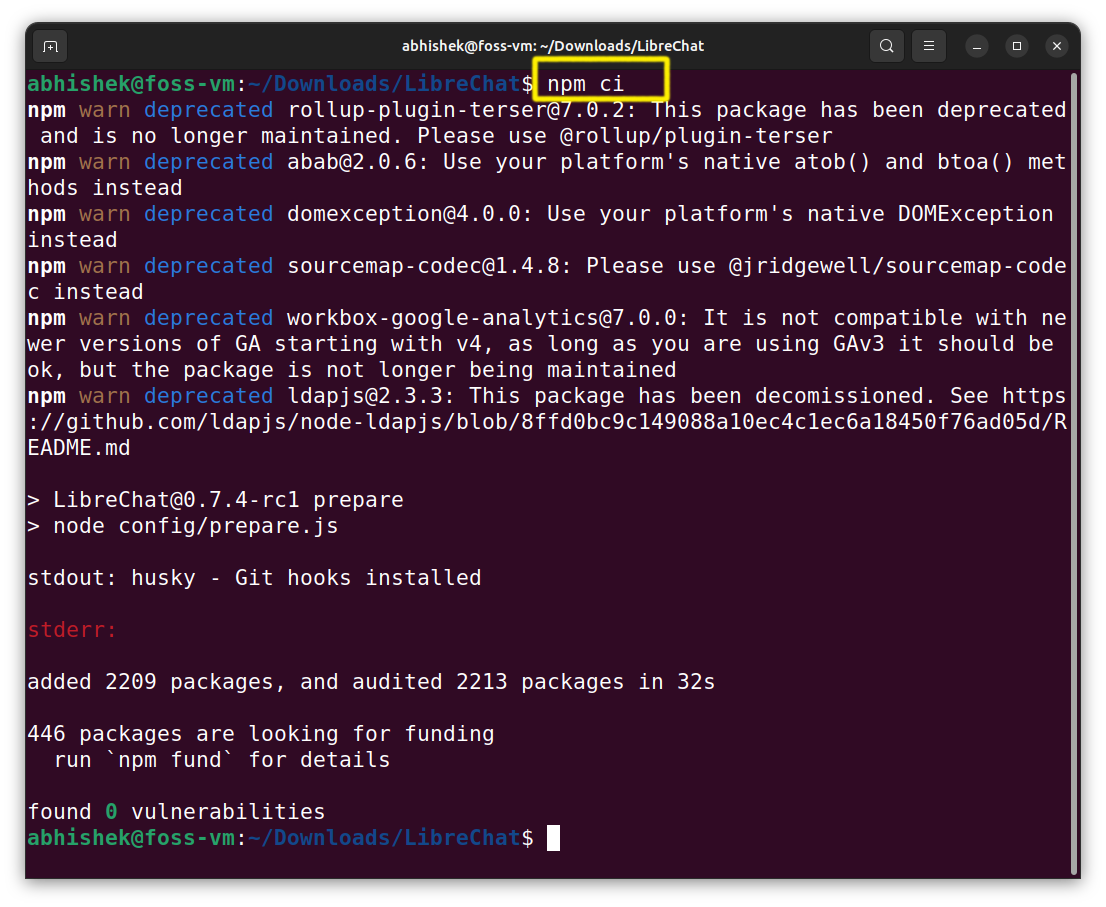

To install the dependencies:

npm ci

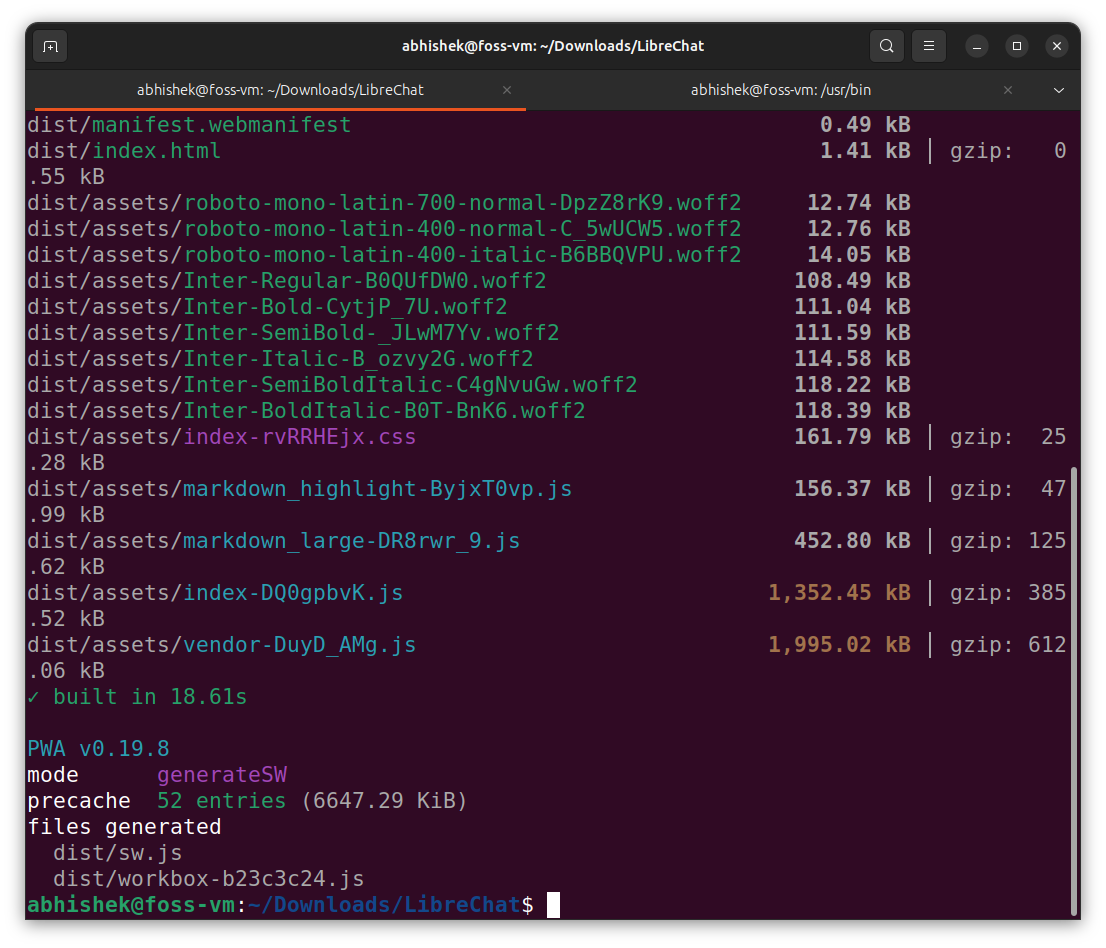

This command will build the frontend of LibreChat:

npm run frontend

💡

In the documentation, they directly build the backend, but during testing, I found that you first need to run the MongoDB server. Otherwise, it will throw errors and won't work at all.

MongoDB requires a data directory to store its data files. Thus, create a directory on your system where you want to store the MongoDB data files (e.g., /path/to/data/directory).

After that, you need to be in the same directory where MongoDB has been installed, i.e. /usr/bin, then just type this command:

./mongod --dbpath=/path/to/data/directory
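mongod will typically refuse to start if the data directory is missing or not writable, so it can help to check that first. A small sketch, where prepare_dbpath is a hypothetical helper name and the path in the usage note is only a placeholder:

```shell
# prepare_dbpath DIR  (hypothetical helper)
# Creates DIR (and any missing parents) and succeeds only if it is writable.
prepare_dbpath() {
  mkdir -p "$1" && [ -w "$1" ]
}
```

You could chain it with the server start, for example `prepare_dbpath "$HOME/mongodb-data" && mongod --dbpath "$HOME/mongodb-data"`, so the server is only launched when the directory is usable.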

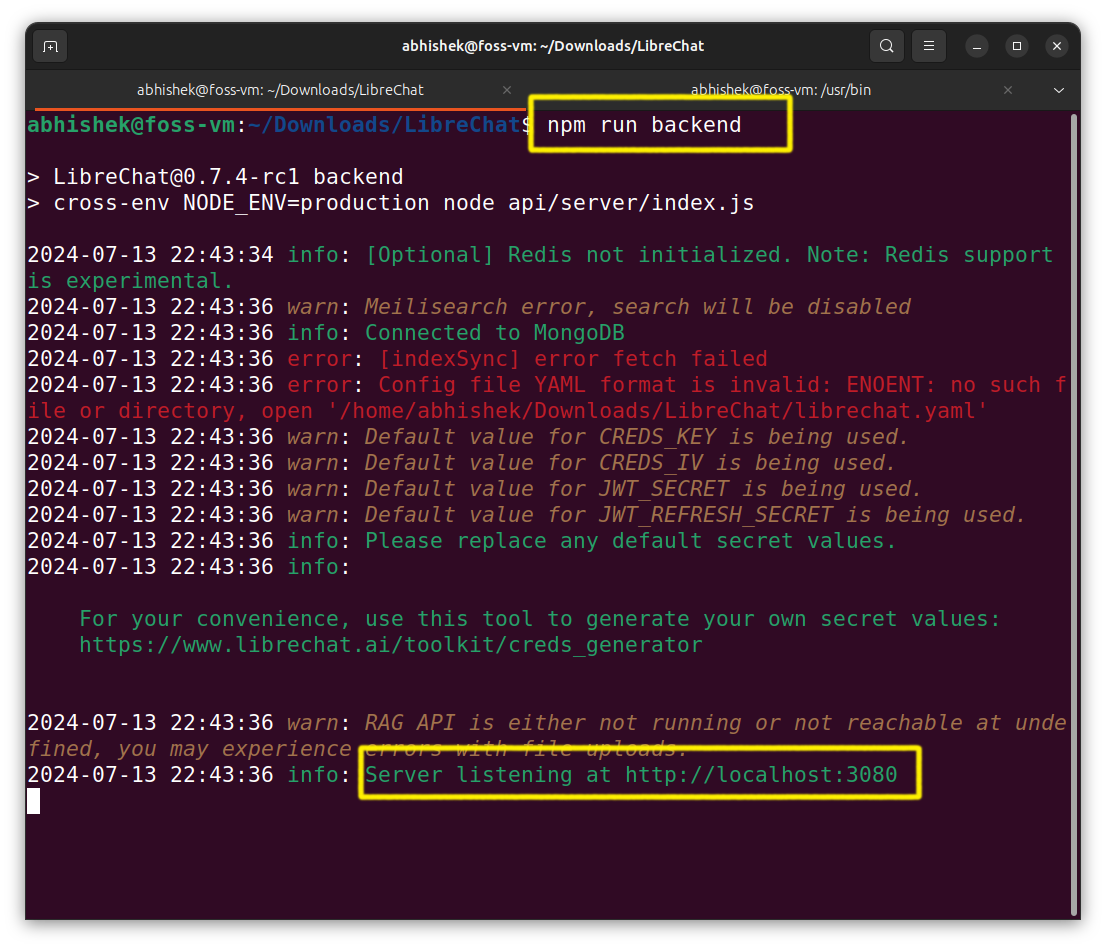

Now you can build the backend (ignore the errors):

npm run backend

You have successfully installed LibreChat. You can access it by visiting http://localhost:3080/

Method 2: Install LibreChat using Docker

Okay, hear me out! This explains my frustration. It took me just a one-line command to run LibreChat in Docker. After battling with all the pop-ups and dependency errors, this was like a walk in the park.

Please ensure that you have Git and Docker installed on your system.
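A quick way to confirm both are available before going further is to look them up on your PATH. This is only a sketch; missing_tools is a name I made up for illustration:

```shell
# missing_tools TOOL...  (hypothetical helper)
# Prints the name of every tool that cannot be found on PATH, one per line.
missing_tools() {
  for tool in "$@"; do
    command -v "$tool" >/dev/null 2>&1 || printf '%s\n' "$tool"
  done
}
```

Running `missing_tools git docker` prints nothing when both are installed; otherwise it lists what you still need to install.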

The first few steps remain the same, like cloning the repository:

git clone https://github.com/danny-avila/LibreChat.git

and, from inside the cloned LibreChat directory (cd LibreChat), creating the .env file from .env.example:

cp .env.example .env

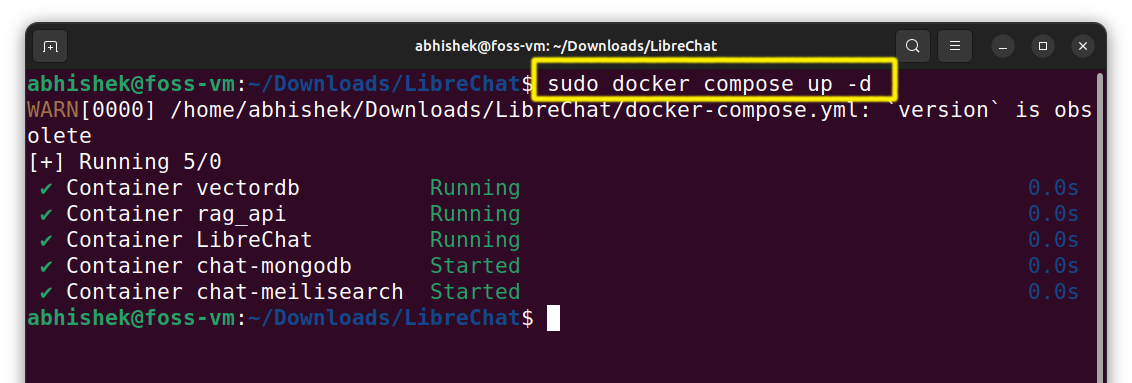

The Docker Compose file is already provided in the repository that we cloned, so all we need to do is start the containers:

sudo docker compose up -d

To access LibreChat, go to http://localhost:3080/.
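The first start can take a while because Docker has to pull the images, so the page may not load right away. Here is a rough sketch for polling until it does. wait_until is a hypothetical helper, and using curl as the probe is my assumption; any command that fails while the server is down would work.

```shell
# wait_until TRIES DELAY COMMAND...  (hypothetical helper)
# Runs COMMAND up to TRIES times, sleeping DELAY seconds between attempts.
# Returns 0 as soon as COMMAND succeeds, 1 if it never does.
wait_until() {
  tries="$1"; delay="$2"; shift 2
  while [ "$tries" -gt 0 ]; do
    "$@" >/dev/null 2>&1 && return 0
    tries=$((tries - 1))
    # Skip the pause after the final failed attempt
    [ "$tries" -gt 0 ] && sleep "$delay"
  done
  return 1
}
```

For example, `wait_until 30 2 curl -fsS http://localhost:3080/ && echo "LibreChat is up"` retries for about a minute before giving up.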

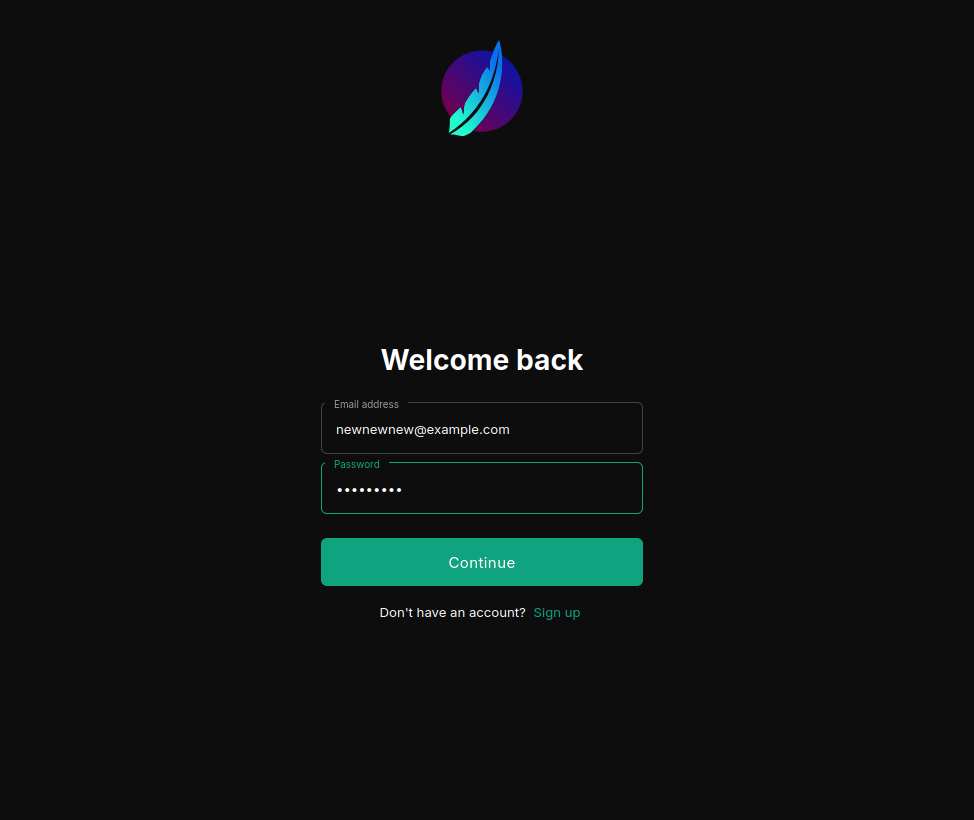

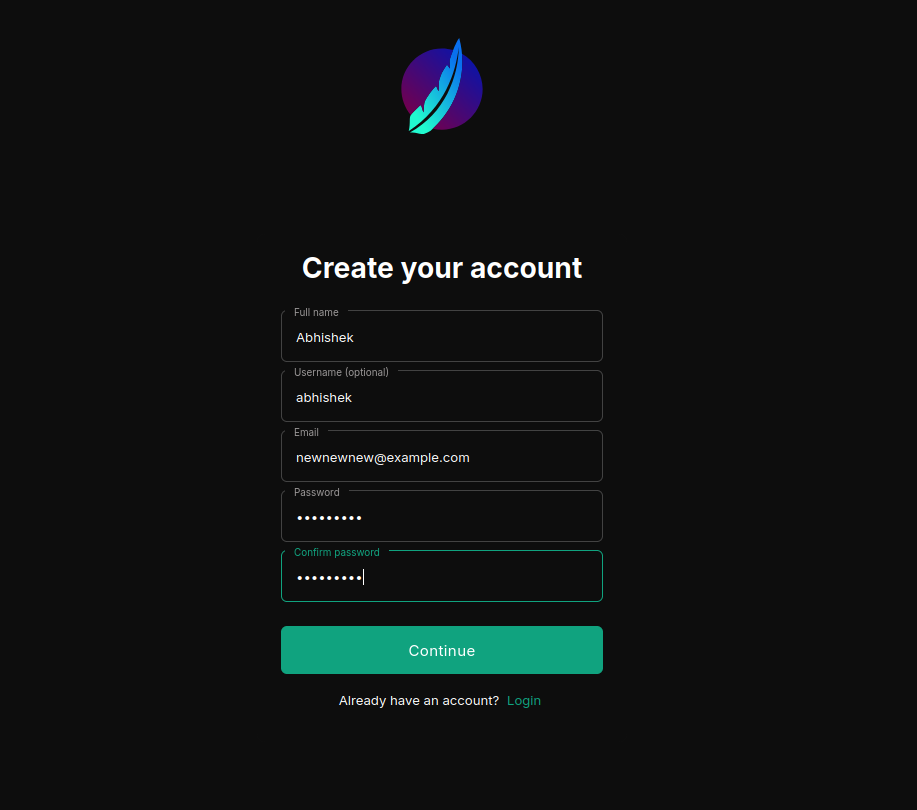

First run of LibreChat

Getting started with LibreChat involves a straightforward login process.

You do have to sign up first before you can log in.

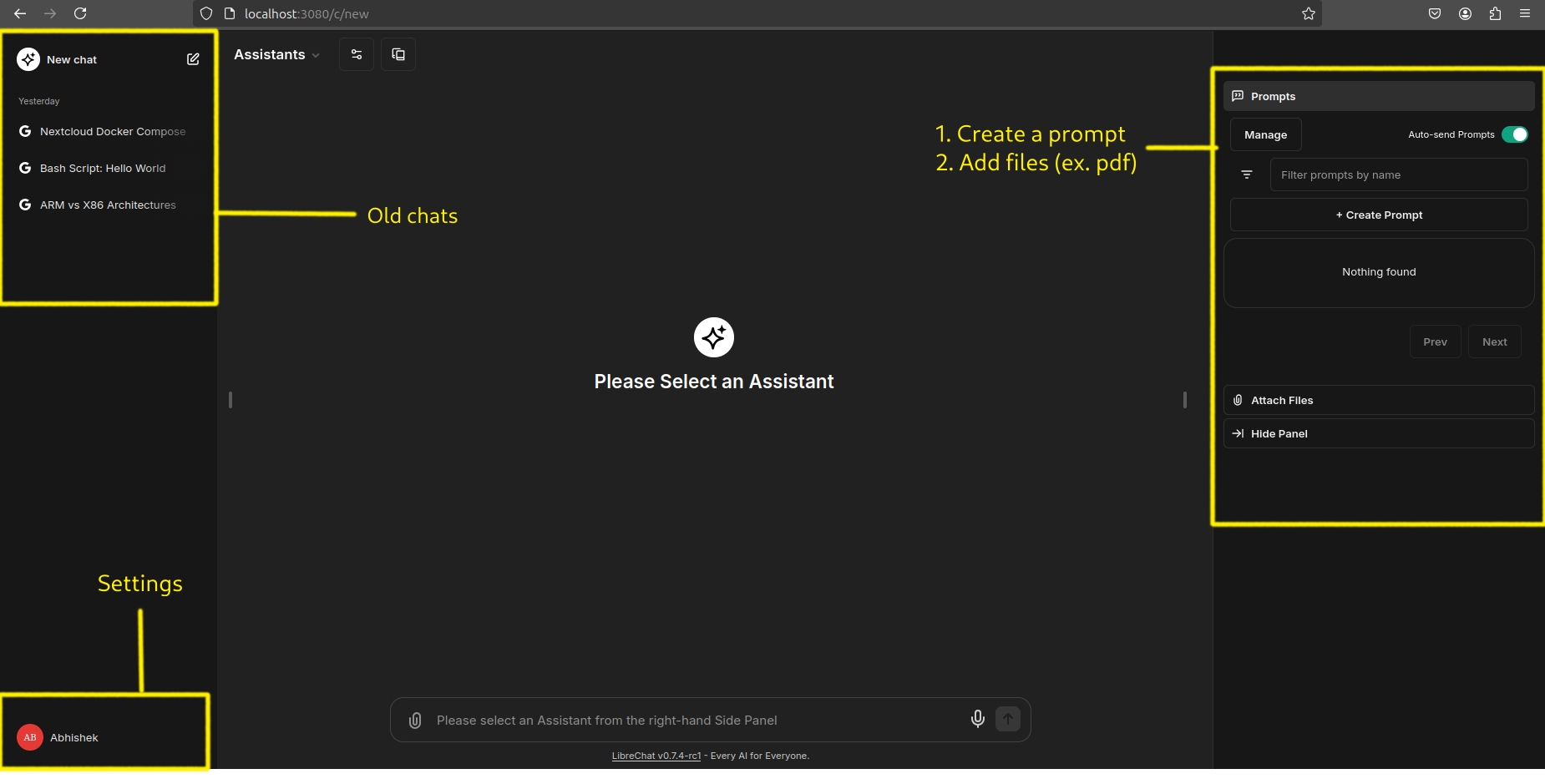

Once you've navigated that initial step, you're greeted by a minimalist interface that is almost reminiscent of ChatGPT's clean design. It's a no-frills approach that puts the focus squarely on the conversation.

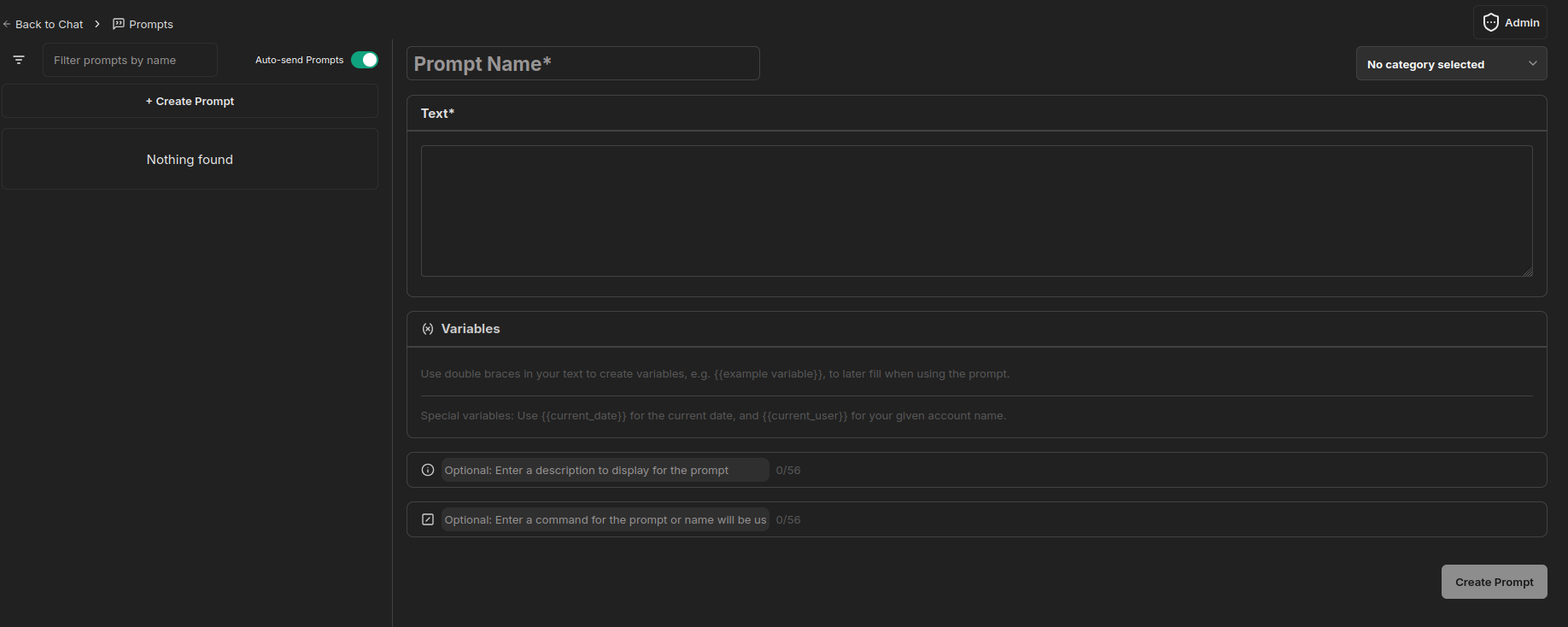

There is an option to create custom prompts as well:

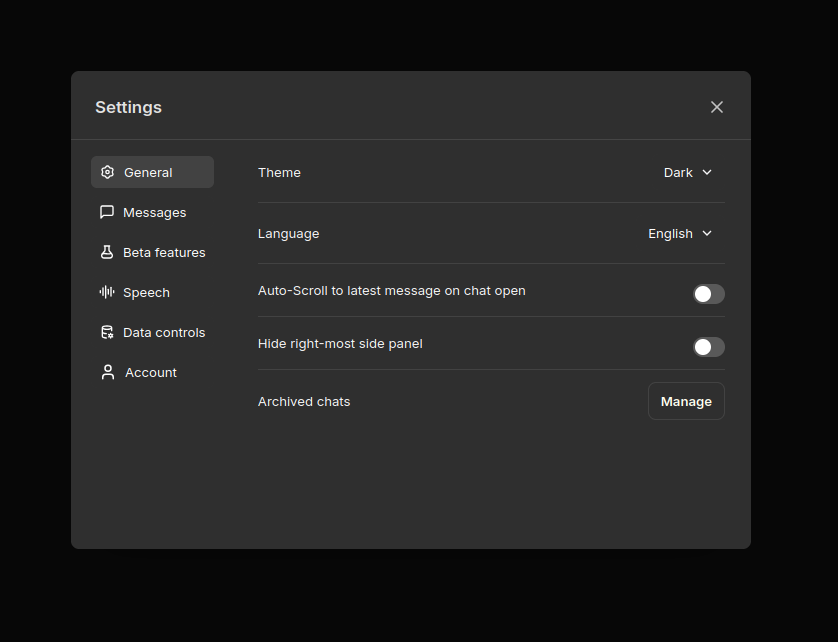

You can customize LibreChat to your liking by going to the settings pane:

While to some people it might not be as visually striking as some other platforms, the simplicity is refreshing and allows you to dive right into interacting with the AI without distractions.

Accessing AI models using their API

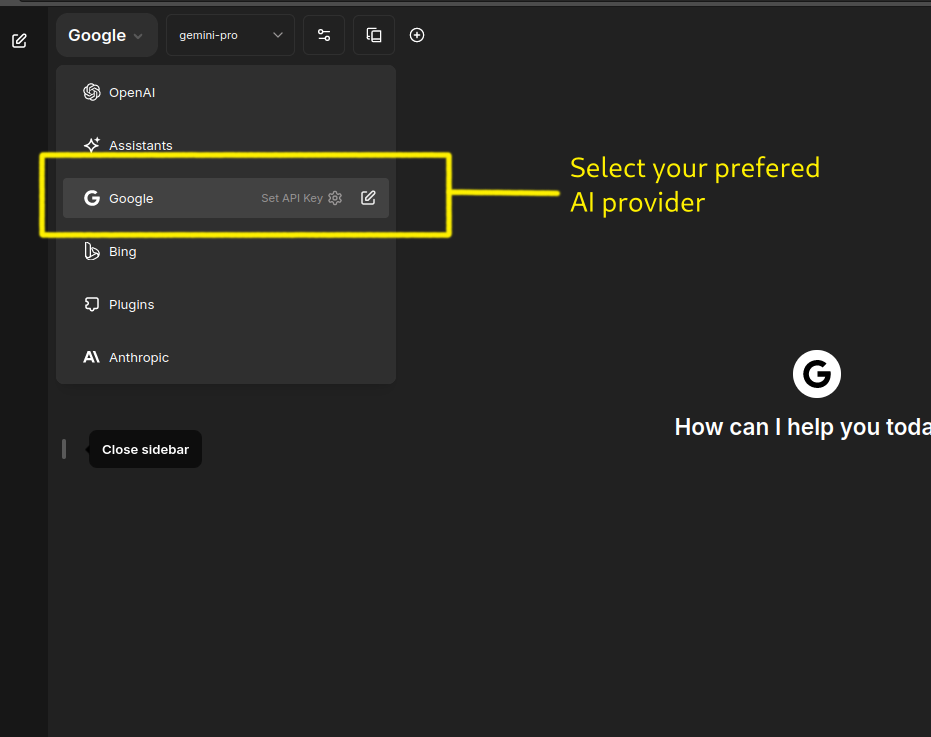

LibreChat operates as a gateway to various AI models. It provides a platform to access and utilize the capabilities of models from other providers like OpenAI's ChatGPT, Google's Gemini, and others.

This means that to fully experience LibreChat's potential, you'll need to have API access to these external AI services.

For this tutorial, I have used Google's Gemini (free) API to have a conversation with our AI assistant. Unfortunately, I couldn't test with OpenAI's API since during testing they flagged my account and banned me.

Getting Google's API key:

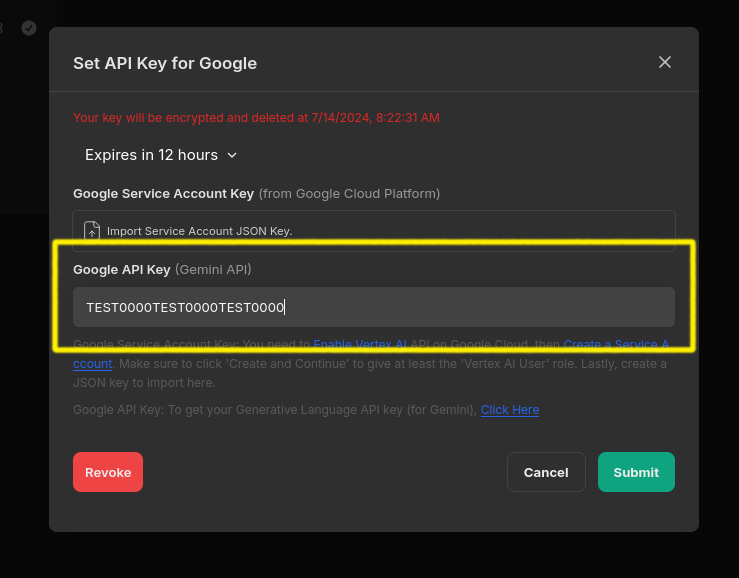

For Google, you can either use the Generative Language API (for Gemini models), or the Vertex AI API (for Gemini, PaLM2 & Codey models).

To use Gemini models through Google AI Studio, you'll need an API key. If you don't already have one, create a key in Google AI Studio.

Once you have your key, provide it in your .env file, which allows all users of your instance to use it:

GOOGLE_KEY=mY_SeCreT_w9347w8_kEY

Or you can enter it via the GUI by selecting your preferred AI provider, i.e. Google in our case:

After that, it will prompt you to enter your API key:

Now we're ready to chat with our chatbot.

Results

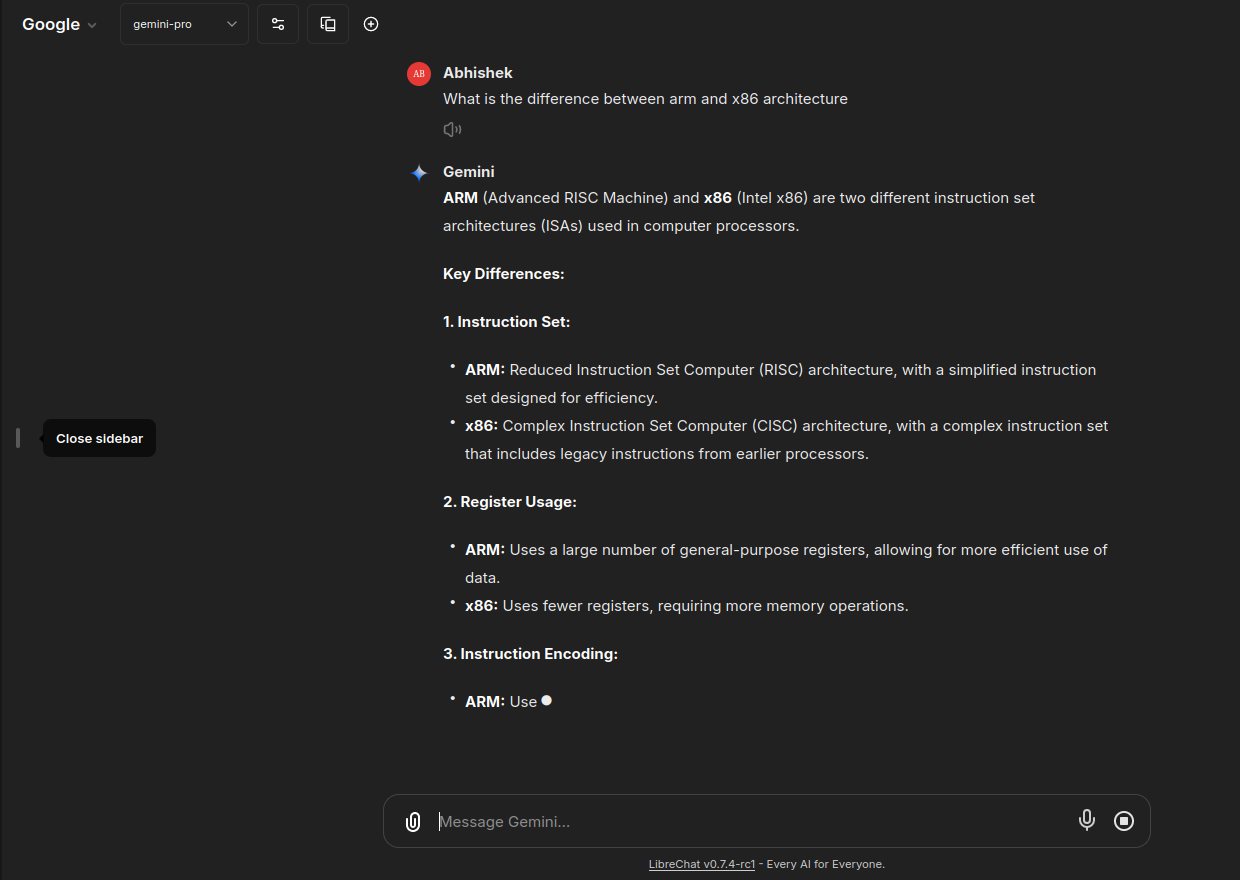

Since LibreChat is essentially a wrapper for powerful AI models housed in massive data centers, you can expect lightning-fast response times and minimal latency.

Here are a few results:

One other one:

As per the documentation, LibreChat can also integrate with Ollama. This means that if you have Ollama installed on your system, you can run local LLMs in LibreChat.

Perhaps we'll have a dedicated tutorial on integrating LibreChat and Ollama in the future.

Final Thoughts

LibreChat presents an intriguing proposition for users seeking AI interaction. Its open-source nature and ability to leverage multiple AI models offer a degree of flexibility and potential customization that proprietary platforms might lack.

In terms of alternatives, LibreChat could be a compelling option for Linux users who find Copilot to be exclusive to Windows.

Unfortunately, due to account restrictions, I couldn't personally test the OpenAI API, a limitation that prevented a more comprehensive evaluation, especially of the "Chat with documents" feature.

Nevertheless, LibreChat's potential is undeniable, and its evolution will be interesting to watch.

If you have more open source AI projects in mind, feel free to share them with us!
