Explainability of machine learning (ML) models used in the medical domain is becoming increasingly important because models need to be explained from a number of perspectives in order to gain adoption. These perspectives range from medical, technological, and legal to the most important perspective: the patient’s. Models developed on text in the medical domain have become statistically accurate, yet clinicians are ethically required to evaluate areas of weakness related to these predictions in order to provide the best care for individual patients. Explainability of these predictions is required for clinicians to make the correct choices on a patient-by-patient basis.

In this post, we show how to improve model explainability in clinical settings using Amazon SageMaker Clarify.

Background

One specific application of ML algorithms in the medical domain, which uses large volumes of text, is clinical decision support systems (CDSSs) for triage. Every day, patients are admitted to hospitals and admission notes are taken. After these notes are taken, the triage process is initiated, and ML models can assist clinicians with estimating clinical outcomes. This can help reduce operational overhead costs and provide optimal care for patients. Understanding why these decisions are suggested by the ML models is extremely important for decision-making related to individual patients.

The purpose of this post is to outline how you can deploy predictive models with Amazon SageMaker for the purposes of triage within hospital settings and use SageMaker Clarify to explain these predictions. The intent is to offer an accelerated path to adoption of predictive techniques within CDSSs for many healthcare organizations.

The notebook and code from this post are available on GitHub. To run it yourself, clone the GitHub repository and open the Jupyter notebook file.

Technical background

A large asset for any acute healthcare organization is its clinical notes. At the time of intake within a hospital, admission notes are taken. A number of recent studies have shown the predictability of key indicators such as diagnoses, procedures, length of stay, and in-hospital mortality. Predictions of these are now highly achievable from admission notes alone, through the use of natural language processing (NLP) algorithms [1].

Advances in NLP models, such as Bi-directional Encoder Representations from Transformers (BERT), have allowed for highly accurate predictions on a corpus of text, such as admission notes, that was previously difficult to get value from. Their prediction of the clinical indicators is highly applicable for use in a CDSS.

Yet, in order to use the new predictions effectively, how these accurate BERT models arrive at their predictions still needs to be explained. There are several techniques to explain the predictions of such models. One such technique is SHAP (SHapley Additive exPlanations), which is a model-agnostic technique for explaining the output of ML models.

What is SHAP

SHAP is a technique for explaining the output of ML models. It provides a way to break down the prediction of an ML model and understand how much each input feature contributes to the final prediction.

SHAP values are based on game theory, specifically the concept of Shapley values, which were originally proposed to allocate the payout of a cooperative game among its players [2]. In the context of ML, each feature in the input space is considered a player in a cooperative game, and the prediction of the model is the payout. SHAP values are calculated by examining the contribution of each feature to the model prediction for each possible combination of features. The average contribution of each feature across all possible feature combinations is then calculated, and this becomes the SHAP value for that feature.
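To make the averaging over feature combinations concrete, the following is a minimal sketch that computes exact Shapley values by brute-force enumeration. The scoring function `toy_score` and its numbers are hypothetical and exist only for illustration. Exact enumeration grows exponentially with the number of features, so practical tools approximate it by sampling rather than computing it this way.

```python
from itertools import combinations
from math import factorial


def shapley_values(value_fn, n_features):
    """Exact Shapley values for a set function over n_features players."""
    players = range(n_features)
    phi = [0.0] * n_features
    for i in players:
        others = [j for j in players if j != i]
        for size in range(len(others) + 1):
            # Weight given to coalitions of this size in the Shapley average.
            weight = (
                factorial(size) * factorial(n_features - size - 1)
                / factorial(n_features)
            )
            for coalition in combinations(others, size):
                present = frozenset(coalition)
                # Marginal contribution of feature i when added to this coalition.
                phi[i] += weight * (value_fn(present | {i}) - value_fn(present))
    return phi


def toy_score(present):
    """Hypothetical model output given the set of sentences that are present."""
    score = 0.1                    # output when no sentence is present
    if 0 in present:
        score += 0.3               # sentence 0 raises the score
    if 1 in present:
        score -= 0.05              # sentence 1 lowers it slightly
    if 0 in present and 2 in present:
        score += 0.2               # sentences 0 and 2 interact
    return score


phi = shapley_values(toy_score, 3)
for index, value in enumerate(phi):
    print(f"sentence {index}: {value:+.3f}")
```

The values sum to the difference between the score with all sentences present and the score with none, and the interaction term is split evenly between the two sentences that take part in it.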

SHAP allows predictions to be explained without knowledge of the model’s inner workings. In addition, there are techniques to display these SHAP explanations in text, so that the medical and patient perspectives can all have intuitive visibility into how algorithms come to their predictions.

With new additions to SageMaker Clarify, and the use of pre-trained models from Hugging Face that are easily implemented in SageMaker, model training and explainability can all be easily done in AWS.

For the purpose of an end-to-end example, we take the clinical outcome of in-hospital mortality and show how this process can be implemented easily in AWS using a pre-trained Hugging Face BERT model, with the predictions explained using SageMaker Clarify.

Choices of Hugging Face model

Hugging Face offers a variety of pre-trained BERT models that have been specialized for use on clinical notes. For this post, we use the bigbird-base-mimic-mortality model. This model is a fine-tuned version of Google’s BigBird model, specifically adapted for predicting mortality using MIMIC ICU admission notes. The model’s task is to determine the likelihood of a patient not surviving a particular ICU stay based on the admission notes. One of the significant advantages of using this BigBird model is its capability to process larger context lengths, which means we can input the complete admission notes without the need for truncation.

Our steps involve deploying this fine-tuned model on SageMaker. We then incorporate this model into a setup that allows for real-time explanation of its predictions. To achieve this level of explainability, we use SageMaker Clarify.

Solution overview

SageMaker Clarify provides ML developers with purpose-built tools to gain greater insights into their ML training data and models. SageMaker Clarify explains both global and local predictions and explains decisions made by computer vision (CV) and NLP models.

The following diagram shows the SageMaker architecture for hosting an endpoint that serves explainability requests. It includes interactions between an endpoint, the model container, and the SageMaker Clarify explainer.

In the sample code, we use a Jupyter notebook to showcase the functionality. However, in a real-world use case, electronic health records (EHRs) or other hospital care applications would directly invoke the SageMaker endpoint to get the same response. In the Jupyter notebook, we deploy a Hugging Face model container to a SageMaker endpoint. Then we use SageMaker Clarify to explain the results that we obtain from the deployed model.

Prerequisites

You need the following prerequisites:

Access the code from the GitHub repository and upload it to your notebook instance. You can also run the notebook in an Amazon SageMaker Studio environment, which is an integrated development environment (IDE) for ML development. We recommend using a Python 3 (Data Science) kernel on SageMaker Studio or a conda_python3 kernel on a SageMaker notebook instance.

Deploy the model with SageMaker Clarify enabled

As the first step, download the model from Hugging Face and upload it to an Amazon Simple Storage Service (Amazon S3) bucket. Then create a model object using the HuggingFaceModel class. This uses a prebuilt container to simplify the process of deploying Hugging Face models to SageMaker. You also use a custom inference script to do the predictions within the container. The following code illustrates the script that’s passed as an argument to the HuggingFaceModel class:

Then you can define the instance type that you deploy this model on:

We then populate the ExecutionRoleArn, ModelName, and PrimaryContainer fields to create a Model.
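As a sketch of how those three fields fit together, the helper below assembles the keyword arguments for the `create_model` API. The function name, model name, role ARN, image URI, and S3 path are all hypothetical placeholders. In practice the container definition would come from the model object created earlier rather than being written by hand.

```python
def build_create_model_request(model_name, role_arn, container_def):
    """Assemble keyword arguments for the SageMaker create_model API."""
    return {
        "ModelName": model_name,
        "ExecutionRoleArn": role_arn,
        "PrimaryContainer": container_def,
    }


# Hypothetical placeholder values, for illustration only.
container_def = {
    "Image": "<account>.dkr.ecr.<region>.amazonaws.com/<huggingface-inference-image>",
    "ModelDataUrl": "s3://<your-bucket>/<prefix>/model.tar.gz",
    "Environment": {"SAGEMAKER_PROGRAM": "inference.py"},
}

request = build_create_model_request(
    "clinical-mortality-model",
    "arn:aws:iam::123456789012:role/ExampleSageMakerRole",
    container_def,
)

# With AWS credentials configured, the request could be sent like this:
#   import boto3
#   boto3.client("sagemaker").create_model(**request)
```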

Next, create an endpoint configuration by calling the create_endpoint_config API. Here, you supply the same model_name used in the create_model API call. The create_endpoint_config API now supports the additional parameter ClarifyExplainerConfig to enable the SageMaker Clarify explainer. The SHAP baseline is mandatory; you can provide it either as inline baseline data (the ShapBaseline parameter) or as an S3 baseline file (the ShapBaselineUri parameter). For optional parameters, see the developer guide.

In the following code, we use a special token as the baseline:

The TextConfig is configured with sentence-level granularity (each sentence is a feature, and we need a few sentences per note for good visualization) and the language as English:
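The pieces described above (inline baseline, sentence-level TextConfig, and the explainer configuration) can be sketched as a single request builder. The helper names, the `<UNK>` baseline token, the instance type, and the resource names are assumptions for illustration; the notebook may use different values.

```python
import csv
import io


def to_csv(rows):
    """Serialize rows to the text/csv form used for the inline SHAP baseline."""
    buffer = io.StringIO()
    csv.writer(buffer, lineterminator="\n").writerows(rows)
    return buffer.getvalue().rstrip("\n")


def build_endpoint_config_request(config_name, model_name, instance_type, baseline_rows):
    """Assemble keyword arguments for create_endpoint_config with Clarify enabled."""
    return {
        "EndpointConfigName": config_name,
        "ProductionVariants": [
            {
                "VariantName": "AllTraffic",
                "ModelName": model_name,          # same name as in create_model
                "InitialInstanceCount": 1,
                "InstanceType": instance_type,
            }
        ],
        "ExplainerConfig": {
            "ClarifyExplainerConfig": {
                "InferenceConfig": {"FeatureTypes": ["text"]},
                "ShapConfig": {
                    "ShapBaselineConfig": {
                        "MimeType": "text/csv",
                        "ShapBaseline": to_csv(baseline_rows),  # inline baseline
                    },
                    # Each sentence is treated as one feature; notes are in English.
                    "TextConfig": {"Granularity": "sentence", "Language": "en"},
                },
            }
        },
    }


# Hypothetical values: a single special token stands in for "no information".
request = build_endpoint_config_request(
    config_name="clinical-mortality-config",
    model_name="clinical-mortality-model",
    instance_type="ml.g4dn.xlarge",
    baseline_rows=[["<UNK>"]],
)

# With AWS credentials configured, the request could be sent like this:
#   import boto3
#   boto3.client("sagemaker").create_endpoint_config(**request)
```

Using a token that carries no clinical meaning as the baseline means each sentence’s attribution is measured against a note in which that sentence is effectively blanked out.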

Finally, after you have the model and endpoint configuration ready, use the create_endpoint API to create your endpoint. The endpoint_name must be unique within a Region in your AWS account. The create_endpoint API is synchronous in nature and returns an immediate response with the endpoint status being in the Creating state.
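Because the call returns while the endpoint is still being created, you typically wait before sending requests. The following is a minimal sketch that takes a SageMaker client as an argument and relies on the client’s `endpoint_in_service` waiter; the function name and resource names are hypothetical.

```python
def create_endpoint_and_wait(sagemaker_client, endpoint_name, endpoint_config_name):
    """Create the endpoint, then block until it is ready to serve requests."""
    sagemaker_client.create_endpoint(
        EndpointName=endpoint_name,
        EndpointConfigName=endpoint_config_name,
    )
    # The call above returns while the endpoint is still in the Creating state.
    waiter = sagemaker_client.get_waiter("endpoint_in_service")
    waiter.wait(EndpointName=endpoint_name)
    description = sagemaker_client.describe_endpoint(EndpointName=endpoint_name)
    return description["EndpointStatus"]


# Example usage, assuming AWS credentials are configured:
#   import boto3
#   status = create_endpoint_and_wait(
#       boto3.client("sagemaker"),
#       "clinical-mortality-endpoint",
#       "clinical-mortality-config",
#   )
```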

Explain the prediction

Now that you have deployed the endpoint with online explainability enabled, you can try some examples. You can invoke the real-time endpoint using the invoke_endpoint method by providing the serialized payload, which in this case is some sample admission notes:
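A minimal sketch of such an invocation follows. It takes a SageMaker runtime client as an argument, serializes a single note as one CSV field, and parses the response body as JSON. The function names are hypothetical, and the assumption that the explainer-enabled endpoint returns a JSON document should be checked against the response you actually receive.

```python
import csv
import io
import json


def serialize_note(note_text):
    """Serialize one admission note as a single CSV field, quoting where needed."""
    buffer = io.StringIO()
    csv.writer(buffer, lineterminator="\n").writerow([note_text])
    return buffer.getvalue().rstrip("\n")


def invoke_with_explanations(runtime_client, endpoint_name, note_text):
    """Send one note to the endpoint and return the parsed response."""
    response = runtime_client.invoke_endpoint(
        EndpointName=endpoint_name,
        ContentType="text/csv",
        Accept="text/csv",
        Body=serialize_note(note_text),
    )
    # Assumption: the body is a JSON document holding predictions and explanations.
    return json.loads(response["Body"].read())


# Example usage, assuming AWS credentials are configured:
#   import boto3
#   runtime = boto3.client("sagemaker-runtime")
#   result = invoke_with_explanations(runtime, "clinical-mortality-endpoint", note)
```

Quoting through the `csv` module matters here because admission notes routinely contain commas and quotation marks, which would otherwise split a note into several fields.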

In the first scenario, let’s assume that the following medical admission note was taken by a healthcare worker:

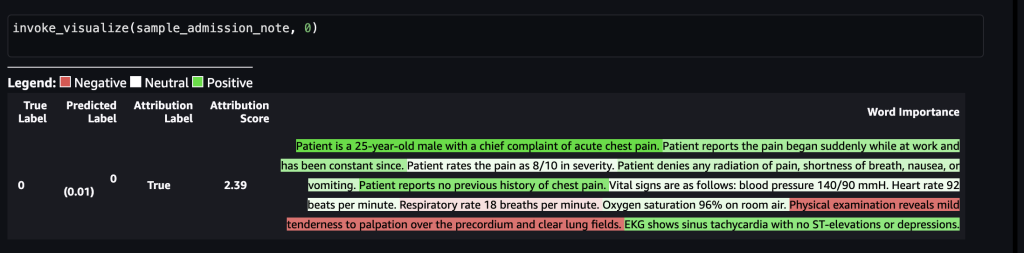

The following screenshot shows the model results.

After this is forwarded to the SageMaker endpoint, the label was predicted as 0, which indicates that the risk of mortality is low. In other words, 0 implies that the admitted patient is in non-acute condition according to the model. However, we need the reasoning behind that prediction. For that, you can use the SHAP values returned in the response. The response includes the SHAP values corresponding to the phrases of the input note, which can be further color-coded as green or red based on how the SHAP values contribute to the prediction. In this case, we see more phrases in green, such as “Patient reports no previous history of chest pain” and “EKG shows sinus tachycardia with no ST-elevations or depressions,” as opposed to red, aligning with the mortality prediction of 0.
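The color-coding can be sketched as follows. The response layout used here (an `explanations` object with a `kernel_shap` list, and per-sentence entries holding `attribution` and `description.partial_text`) is an assumption about the explainer output and should be verified against a real response. The sample response and its numbers are made up purely to exercise the code, and mapping positive values to red is likewise an assumption about the convention used in the screenshots.

```python
def sentence_attributions(explained_response):
    """Return (sentence, shap_value) pairs for the first record's text feature."""
    # Assumed layout: explanations -> kernel_shap -> [record][feature].
    first_record = explained_response["explanations"]["kernel_shap"][0]
    text_feature = first_record[0]
    return [
        (item["description"]["partial_text"], item["attribution"][0])
        for item in text_feature["attributions"]
    ]


def color_for(shap_value):
    """Assumed convention: positive values push toward mortality (red)."""
    return "red" if shap_value > 0 else "green"


# Made-up response with placeholder sentences and values, for illustration only.
sample_response = {
    "explanations": {
        "kernel_shap": [[{
            "feature_header": "text",
            "attributions": [
                {"attribution": [-0.2],
                 "description": {"partial_text": "First sentence.", "start_idx": 0}},
                {"attribution": [0.05],
                 "description": {"partial_text": " Second sentence.", "start_idx": 15}},
            ],
        }]]
    }
}

pairs = sentence_attributions(sample_response)
for sentence, value in pairs:
    print(f"{color_for(value):5s} {value:+.2f} {sentence.strip()}")
```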

In the second scenario, let’s assume that the following medical admission note was taken by a healthcare worker:

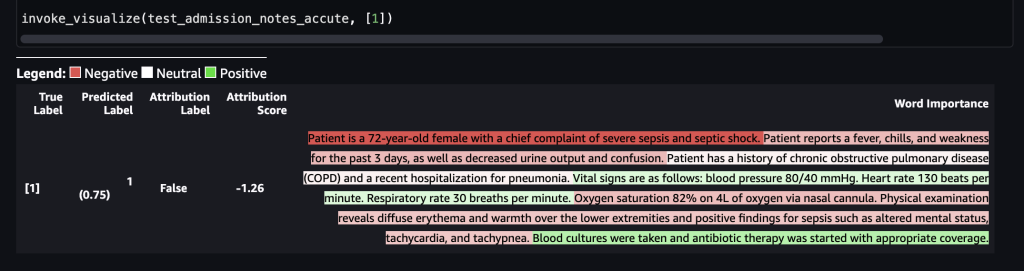

The following screenshot shows our results.

After this is forwarded to the SageMaker endpoint, the label was predicted as 1, which indicates that the risk of mortality is high. This implies that the admitted patient is in acute condition according to the model. However, we need the reasoning behind that prediction. Again, you can use the SHAP values returned in the response. The response includes the SHAP values corresponding to the phrases of the input note, which can be further color-coded. In this case, we see more phrases in red, such as “Patient reports a fever, chills, and weakness for the past 3 days, as well as decreased urine output and confusion” and “Patient is a 72-year-old female with a chief complaint of severe sepsis shock,” as opposed to green, aligning with the mortality prediction of 1.

The clinical care team can use these explanations to assist in their decisions on the care process for each individual patient.

Clean up

To clean up the resources that have been created as part of this solution, run the following statements:
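A minimal sketch of the cleanup, assuming the three resources created in this walkthrough (endpoint, endpoint configuration, and model), might look like the following. The function name and resource names are hypothetical, and the notebook may create additional resources that also need to be removed.

```python
def clean_up(sagemaker_client, endpoint_name, endpoint_config_name, model_name):
    """Delete the endpoint first, then its configuration, then the model."""
    sagemaker_client.delete_endpoint(EndpointName=endpoint_name)
    sagemaker_client.delete_endpoint_config(EndpointConfigName=endpoint_config_name)
    sagemaker_client.delete_model(ModelName=model_name)


# Example usage, assuming AWS credentials are configured:
#   import boto3
#   clean_up(
#       boto3.client("sagemaker"),
#       "clinical-mortality-endpoint",
#       "clinical-mortality-config",
#       "clinical-mortality-model",
#   )
```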

Conclusion

This post showed you how to use SageMaker Clarify to explain decisions in a healthcare use case based on the medical notes captured during various stages of the triage process. This solution can be integrated into existing decision support systems to provide another data point to clinicians as they evaluate patients for admission into the ICU. To learn more about using AWS services in the healthcare industry, check out the following blog posts:

References

[1] https://aclanthology.org/2021.eacl-main.75/

[2] https://arxiv.org/pdf/1705.07874.pdf

About the authors

Shamika Ariyawansa, serving as a Senior AI/ML Solutions Architect in the Global Healthcare and Life Sciences division at Amazon Web Services (AWS), has a keen focus on Generative AI. He assists customers in integrating Generative AI into their initiatives, emphasizing the importance of explainability within their AI-driven initiatives. Beyond his professional commitments, Shamika passionately pursues snowboarding and off-roading adventures.


Ted Spencer is an experienced Solutions Architect with extensive acute healthcare experience. He is passionate about applying machine learning to solve new use cases, and rounds out solutions with both the end consumer and their business/clinical context in mind. He lives in Toronto, Ontario, Canada, and enjoys traveling with his family and training for triathlons as time permits.


Ram Pathangi is a Solutions Architect at AWS supporting healthcare and life sciences customers in the San Francisco Bay Area. He has helped customers in finance, healthcare, life sciences, and hi-tech verticals run their business successfully on the AWS Cloud. He specializes in Databases, Analytics, and Machine Learning.
