Why it matters: Nvidia holds an estimated 75 to 90 percent of the AI chip market. Despite that dominance, rivals continue to build hardware and accelerators to chip away at the company's AI empire. Microsoft drew the interest of AI professionals and enthusiasts after outlining the design of its custom accelerator.

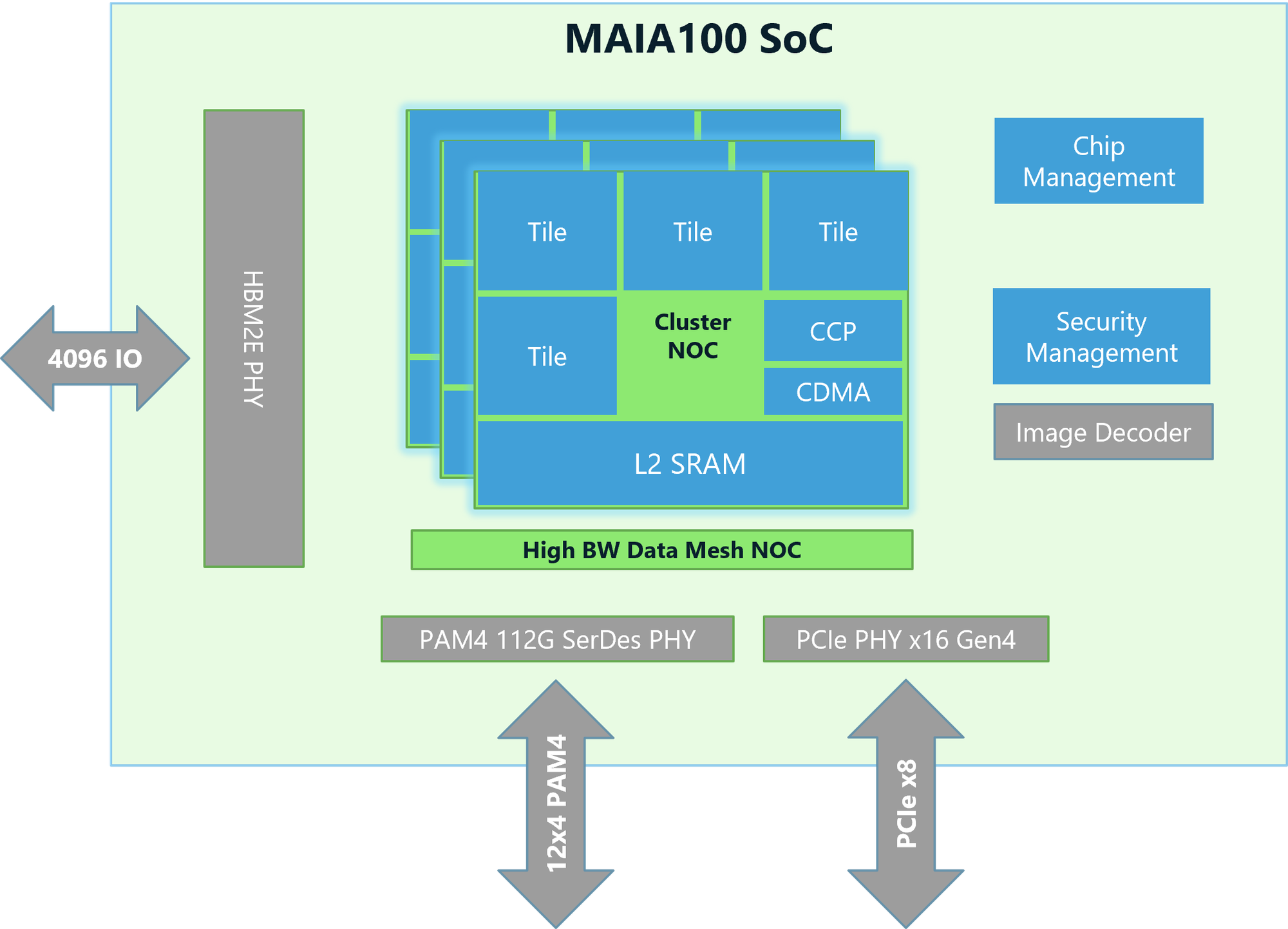

Microsoft presented its first AI accelerator, Maia 100, at this year's Hot Chips conference. It features an architecture that uses custom server boards, racks, and software to provide cost-effective, enhanced solutions and performance for AI-based workloads. Redmond designed the custom accelerator to run OpenAI models in Azure data center environments.

The chips are built on TSMC's 5nm process node and are provisioned as 500W parts but can support up to a 700W TDP. Maia's design can deliver high levels of overall performance while efficiently managing the overall power draw of its targeted workloads. The accelerator also features 64GB of HBM2E, a step down from the Nvidia H100's 80GB and the B200's 192GB of HBM3E.
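To put those memory capacities in perspective, here is a rough back-of-the-envelope sketch of how many model weights could fit in each accelerator's memory. It assumes 1 GB equals 10^9 bytes and that all memory holds weights only, which ignores activations, caches, and runtime overhead, so real limits would be lower. The function name and the bytes-per-parameter table are illustrative, not figures from Microsoft.

```python
# Rough estimate of how many model parameters fit in each accelerator's HBM.
# Assumptions (illustrative, not from Microsoft): 1 GB = 10**9 bytes, and
# the entire memory is used for weights only.

HBM_CAPACITY_GB = {"Maia 100": 64, "Nvidia H100": 80, "Nvidia B200": 192}
BYTES_PER_PARAM = {"FP32": 4, "BF16": 2}


def max_params_billions(capacity_gb: float, bytes_per_param: int) -> float:
    """Upper bound on parameter count, in billions, for a given capacity."""
    # GB and "billions" share the 10**9 factor, so it cancels out.
    return capacity_gb / bytes_per_param


if __name__ == "__main__":
    for chip, capacity in HBM_CAPACITY_GB.items():
        for dtype, size in BYTES_PER_PARAM.items():
            bound = max_params_billions(capacity, size)
            print(f"{chip:12s} {dtype}: up to ~{bound:.0f}B parameters")
```

Under these assumptions, halving the bytes per parameter doubles the number of weights that fit, which is one reason low-precision data types matter on a part with less memory than its rivals.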

According to Microsoft's Hot Chips presentation and a recent blog post, the Maia 100 SoC architecture features a high-speed tensor unit (16xRx16) offering rapid processing for training and inferencing while supporting a wide range of data types, including low-precision types such as Microsoft's MX format. It has a loosely coupled superscalar engine (vector processor) built with a custom ISA to support data types including FP32 and BF16, a Direct Memory Access engine supporting different tensor sharding schemes, and hardware semaphores that enable asynchronous programming.
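For readers unfamiliar with the two floating-point types named above, the sketch below shows how BF16 relates to FP32: BF16 keeps FP32's sign bit and 8 exponent bits but only the top 7 mantissa bits. This is a generic illustration of the formats, not Maia-specific code. The helper names are made up for this example, and it uses simple truncation, whereas real hardware typically rounds to nearest.

```python
import struct


def fp32_to_bf16_bits(x: float) -> int:
    """Return a 16-bit BF16 pattern by truncating the FP32 encoding of x.

    Assumption: plain truncation (drop the low 16 bits); hardware usually
    applies round-to-nearest-even instead.
    """
    (bits,) = struct.unpack(">I", struct.pack(">f", x))
    return bits >> 16


def bf16_bits_to_float(b: int) -> float:
    """Expand a 16-bit BF16 pattern back to a Python float."""
    (value,) = struct.unpack(">f", struct.pack(">I", (b & 0xFFFF) << 16))
    return value


def round_trip(x: float) -> float:
    """Show the value that survives an FP32 -> BF16 -> FP32 round trip."""
    return bf16_bits_to_float(fp32_to_bf16_bits(x))


if __name__ == "__main__":
    for v in (1.0, 3.14159, 1e30):
        print(f"{v!r:>10} -> bits 0x{fp32_to_bf16_bits(v):04X} -> {round_trip(v)!r}")
```

BF16 halves the storage per value while keeping FP32's dynamic range, at the cost of precision: in this sketch 3.14159 comes back as 3.140625.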

The Maia 100 AI accelerator also provides developers with the Maia SDK. The kit includes tools that let AI developers quickly port models previously written in PyTorch and Triton. The SDK includes framework integration, developer tools, two programming models, and compilers. It also has optimized compute and communication kernels and the Maia Host/Device Runtime, which has a hardware abstraction layer supporting memory allocation, kernel launches, scheduling, and device management.

Microsoft posted more information on the SDK, Maia's backend network protocol, and optimization in its Inside Maia 100 blog post. It makes a good read for developers and AI enthusiasts.