Digital publishers are continuously looking for ways to streamline and automate their media workflows in order to generate and publish new content as quickly as they can.

Publishers can have repositories containing millions of images and, in order to save money, they need to be able to reuse these images across articles. Finding the image that best matches an article in repositories of this scale can be a time-consuming, repetitive, manual task that can be automated. It also relies on the images in the repository being tagged correctly, which can also be automated (for a customer success story, refer to Aller Media Finds Success with KeyCore and AWS).

In this post, we demonstrate how to use Amazon Rekognition, Amazon SageMaker JumpStart, and Amazon OpenSearch Service to solve this business problem. Amazon Rekognition makes it easy to add image analysis capability to your applications without any machine learning (ML) expertise and comes with various APIs to fulfil use cases such as object detection, content moderation, face detection and analysis, and text and celebrity recognition, which we use in this example. SageMaker JumpStart is a low-code service that comes with pre-built solutions, example notebooks, and many state-of-the-art, pre-trained models from publicly available sources that are easy to deploy with a single click into your AWS account. These models have been packaged to be securely and easily deployable via Amazon SageMaker APIs. The new SageMaker JumpStart Foundation Hub allows you to easily deploy large language models (LLM) and integrate them with your applications. OpenSearch Service is a fully managed service that makes it simple to deploy, scale, and operate OpenSearch. OpenSearch Service allows you to store vectors and other data types in an index, and offers rich functionality that allows you to search for documents using vectors and measuring the semantic relatedness, which we use in this post.

The end goal of this post is to show how we can surface a set of images that are semantically similar to some text, be that an article or TV synopsis.

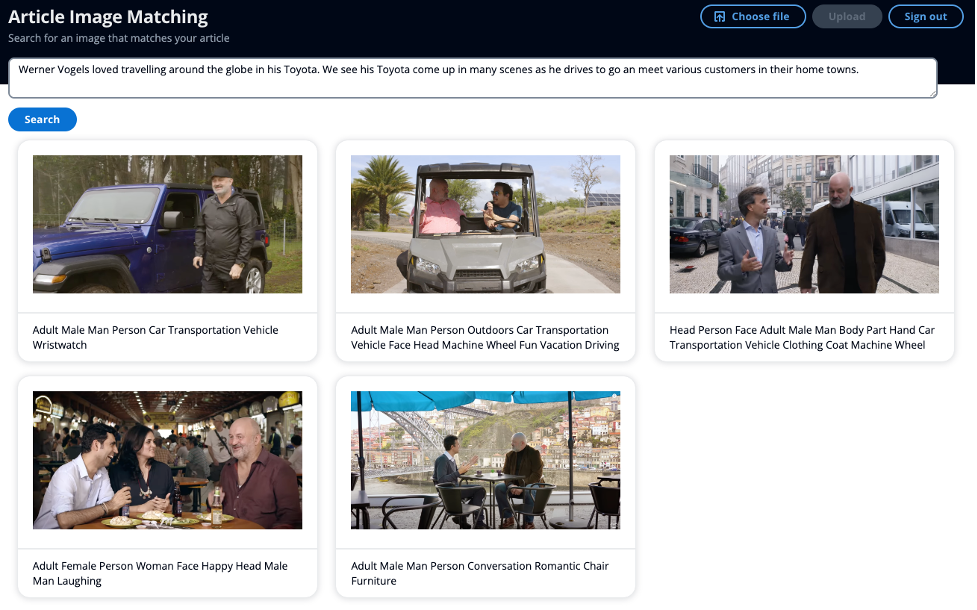

The next screenshot reveals an instance of taking a mini article as your search enter, somewhat than utilizing key phrases, and having the ability to floor semantically related photos.

Overview of solution

The solution is divided into two main sections. First, you extract label and celebrity metadata from the images, using Amazon Rekognition. You then generate an embedding of the metadata using an LLM. You store the celebrity names and the embedding of the metadata in OpenSearch Service. In the second main section, you have an API to query your OpenSearch Service index for images using OpenSearch’s intelligent search capabilities to find images that are semantically similar to your text.

This solution uses our event-driven services Amazon EventBridge, AWS Step Functions, and AWS Lambda to orchestrate the process of extracting metadata from the images using Amazon Rekognition. The workflow makes two Amazon Rekognition API calls to extract labels and known celebrities from the image.

The Amazon Rekognition celebrity detection API returns a number of elements in the response. For this post, you use the following:

Name, Id, and Urls – The celebrity name, a unique Amazon Rekognition ID, and a list of URLs such as the celebrity’s IMDb or Wikipedia link for further information.

MatchConfidence – A match confidence score that can be used to control API behavior. We recommend applying a suitable threshold to this score in your application to choose your preferred operating point. For example, by setting a threshold of 99%, you can eliminate more false positives but may miss some potential matches.

In your second API call, the Amazon Rekognition label detection API returns a number of elements in the response. You use the following:

Name – The name of the detected label

Confidence – The level of confidence in the label assigned to a detected object
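As a minimal sketch of how these response fields might be consumed, the following Python filters both responses by a confidence threshold and joins the surviving names into a single metadata sentence. The `recognize_celebrities` and `detect_labels` calls and the field names are those of the boto3 Rekognition client; the helper names, the 80% label threshold, and the comma-joined sentence format are assumptions made for illustration, not the post's actual implementation.

```python
from __future__ import annotations

from typing import Any


def celebrity_names(response: dict[str, Any], min_match_confidence: float = 99.0) -> list[str]:
    """Keep celebrities whose MatchConfidence meets the chosen operating point."""
    return [
        face["Name"]
        for face in response.get("CelebrityFaces", [])
        if face.get("MatchConfidence", 0.0) >= min_match_confidence
    ]


def label_names(response: dict[str, Any], min_confidence: float = 80.0) -> list[str]:
    """Keep labels whose Confidence meets the (assumed) 80% threshold."""
    return [
        label["Name"]
        for label in response.get("Labels", [])
        if label.get("Confidence", 0.0) >= min_confidence
    ]


def analyse_image(rekognition: Any, bucket: str, key: str) -> dict[str, Any]:
    """Run both Rekognition calls against an S3 object and summarise the results.

    `rekognition` is expected to be a boto3 Rekognition client,
    for example boto3.client("rekognition").
    """
    image = {"S3Object": {"Bucket": bucket, "Name": key}}
    celebrities = celebrity_names(rekognition.recognize_celebrities(Image=image))
    labels = label_names(rekognition.detect_labels(Image=image))
    # Assumed format: a simple comma-separated sentence to embed later.
    sentence = ", ".join(celebrities + labels)
    return {"celebrities": celebrities, "labels": labels, "sentence": sentence}
```

Raising `min_match_confidence` trades recall for precision, which is the operating-point choice described above.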

A key concept in semantic search is embeddings. A word embedding is a numerical representation of a word or group of words, in the form of a vector. When you have many vectors, you can measure the distance between them, and vectors that are close in distance are semantically similar. Therefore, if you generate an embedding of all of your images’ metadata, and then generate an embedding of your text, be that an article or TV synopsis for example, using the same model, you can then find images that are semantically similar to your given text.
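To make the idea of distance between vectors concrete, here is a small sketch of cosine similarity in plain Python. The three-dimensional vectors are made-up toy values chosen for illustration; real embeddings have thousands of dimensions.

```python
import math


def cosine_similarity(a: list, b: list) -> float:
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_a * norm_b)


# Toy embeddings (illustrative values only).
car = [0.9, 0.1, 0.0]
toyota = [0.8, 0.2, 0.1]
beach = [0.0, 0.2, 0.9]

# "toyota" points in nearly the same direction as "car", "beach" does not.
print(cosine_similarity(car, toyota) > cosine_similarity(car, beach))
```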

There are many models available within SageMaker JumpStart to generate embeddings. For this solution, you use GPT-J 6B Embedding from Hugging Face. It produces high-quality embeddings and has one of the top performance metrics according to Hugging Face’s evaluation results. Amazon Bedrock is another option, still in preview, where you could choose the Amazon Titan Text Embeddings model to generate the embeddings.

You use the GPT-J pre-trained model from SageMaker JumpStart to create an embedding of the image metadata and store this as a k-NN vector in your OpenSearch Service index, along with the celebrity name in another field.

The second part of the solution is to return the top 10 images to the user that are semantically similar to their text, be this an article or TV synopsis, including any celebrities if present. When choosing an image to accompany an article, you want the image to resonate with the pertinent points from the article. SageMaker JumpStart hosts many summarization models that can take a long body of text and reduce it to the main points from the original. For the summarization model, you use the AI21 Labs Summarize model. This model provides high-quality recaps of news articles and the source text can contain roughly 10,000 words, which allows the user to summarize the entire article in one go.

To detect if the text contains any names, potentially known celebrities, you use Amazon Comprehend, which can extract key entities from a text string. You then filter by the Person entity, which you use as an input search parameter.

You then take the summarized article and generate an embedding to use as another input search parameter. It’s important to note that you use the same model deployed on the same infrastructure to generate the embedding of the article as you did for the images. You then use Exact k-NN with scoring script so that you can search by two fields: celebrity names and the vector that captured the semantic information of the article. Refer to this post, Amazon OpenSearch Service’s vector database capabilities explained, on the scalability of Score script and how this approach on large indexes may lead to high latencies.

Walkthrough

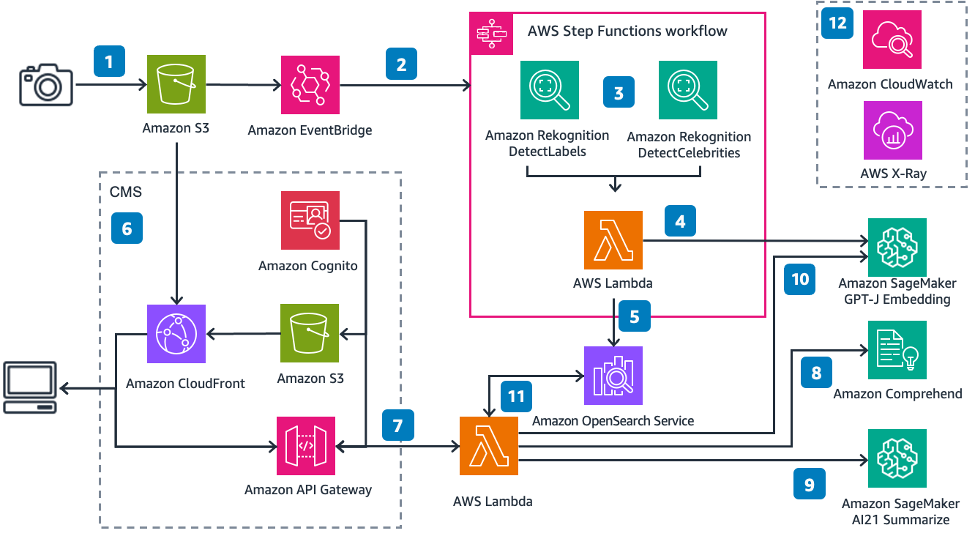

The next diagram illustrates the answer structure.

Following the numbered labels:

1. You upload an image to an Amazon S3 bucket

2. Amazon EventBridge listens to this event, and then triggers an AWS Step Functions execution

3. The Step Functions workflow takes the image input and extracts the label and celebrity metadata

4. The AWS Lambda function takes the image metadata and generates an embedding

5. The Lambda function then inserts the celebrity name (if present) and the embedding as a k-NN vector into an OpenSearch Service index

6. Amazon S3 hosts a simple static website, served by an Amazon CloudFront distribution. The front-end user interface (UI) allows you to authenticate with the application using Amazon Cognito to search for images

7. You submit an article or some text via the UI

8. Another Lambda function calls Amazon Comprehend to detect any names in the text

9. The function then summarizes the text to get the pertinent points from the article

10. The function generates an embedding of the summarized article

11. The function then searches the OpenSearch Service image index for any image matching the celebrity name and the k-nearest neighbors for the vector using cosine similarity

12. Amazon CloudWatch and AWS X-Ray give you observability into the end-to-end workflow to alert you of any issues.
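The first two steps can be sketched as an EventBridge event pattern plus a helper that pulls the image location out of the event. This is an illustrative sketch, not the post's deployment code: the function names are hypothetical, and it assumes the bucket has EventBridge notifications enabled so that S3 emits `Object Created` events.

```python
from __future__ import annotations

from typing import Any


def s3_upload_pattern(bucket_name: str) -> dict[str, Any]:
    """EventBridge event pattern matching new objects in one bucket."""
    return {
        "source": ["aws.s3"],
        "detail-type": ["Object Created"],
        "detail": {"bucket": {"name": [bucket_name]}},
    }


def image_location(event: dict[str, Any]) -> tuple[str, str]:
    """Extract (bucket, key) from an S3 'Object Created' EventBridge event."""
    detail = event["detail"]
    return detail["bucket"]["name"], detail["object"]["key"]
```

The pattern would be attached to an EventBridge rule whose target is the Step Functions state machine; the state machine input then carries the bucket and key that the metadata extraction step needs.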

Extract and store key image metadata

The Amazon Rekognition DetectLabels and RecognizeCelebrities APIs give you the metadata from your images: text labels that you can use to form a sentence from which to generate an embedding. The article gives you a text input that you can use to generate an embedding.

Generate and store word embeddings

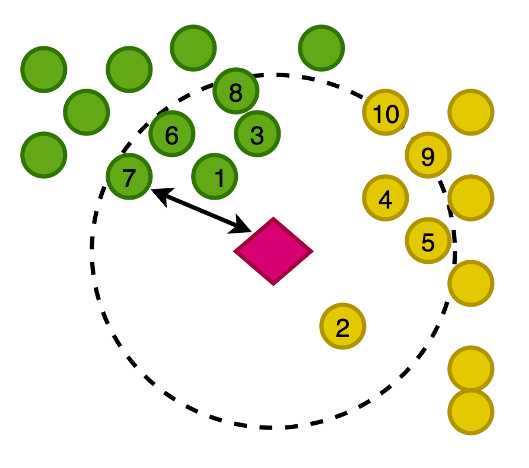

The next determine demonstrates plotting the vectors of our photos in a 2-dimensional house, the place for visible support, we now have categorized the embeddings by their major class.

You also generate an embedding of this newly written article, so that you can search OpenSearch Service for the nearest images to the article in this vector space. Using the k-nearest neighbors (k-NN) algorithm, you define how many images to return in your results.

Zooming in on the preceding figure, the vectors are ranked based on their distance from the article, and the K nearest images are returned, where K is 10 in this example.
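The ranking step can be illustrated with a brute-force sketch in plain Python: score every image vector against the article vector and keep the K best. This only demonstrates the idea; in the solution the ranking runs inside OpenSearch Service, and the image identifiers and function names here are hypothetical.

```python
from __future__ import annotations

import heapq
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def nearest_images(
    article_vector: list[float],
    image_vectors: dict[str, list[float]],
    k: int = 10,
) -> list[tuple[str, float]]:
    """Return the k (image_id, score) pairs most similar to the article, best first."""
    scored = (
        (image_id, cosine_similarity(article_vector, vector))
        for image_id, vector in image_vectors.items()
    )
    return heapq.nlargest(k, scored, key=lambda pair: pair[1])
```

Because every stored vector is compared with the query, the cost grows linearly with the number of images, which is the scalability trade-off of exact search.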

OpenSearch Service offers the capability to store large vectors in an index, and also offers the functionality to run queries against the index using k-NN, such that you can query with a vector to return the k-nearest documents that have vectors in close distance using various measurements. For this example, we use cosine similarity.
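As a sketch of what such an index could look like, the following builds an index body with a `knn_vector` field and a document to store in it. The field names (`image_vector`, `celebrities`, `image_path`) are hypothetical, and the default dimension of 4096 is an assumption about the GPT-J 6B embedding size; check the output length of your deployed model before creating the index.

```python
from __future__ import annotations

from typing import Any


def image_index_body(dimension: int = 4096) -> dict[str, Any]:
    """Index settings and mappings with a vector field and a celebrity field."""
    return {
        "settings": {"index": {"knn": True}},
        "mappings": {
            "properties": {
                # Assumed field names; the dimension must match the embedding model.
                "image_vector": {"type": "knn_vector", "dimension": dimension},
                "celebrities": {"type": "text"},
                "image_path": {"type": "keyword"},
            }
        },
    }


def image_document(
    image_path: str,
    celebrities: list[str],
    embedding: list[float],
    dimension: int = 4096,
) -> dict[str, Any]:
    """Build the document stored for one image, validating the vector length."""
    if len(embedding) != dimension:
        raise ValueError(f"expected {dimension} dimensions, got {len(embedding)}")
    return {
        "image_path": image_path,
        "celebrities": celebrities,
        "image_vector": embedding,
    }
```

The index body would be passed to the create-index call of whichever OpenSearch client you use, and each document to its index call.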

Detect names in the article

You use Amazon Comprehend, an AI natural language processing (NLP) service, to extract key entities from the article. In this example, you use Amazon Comprehend to extract entities and filter by the entity type Person, which returns any names that Amazon Comprehend can find in the journalist’s story, with just a few lines of code.
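A minimal sketch of this step, assuming a boto3 Comprehend client is passed in: `detect_entities` and the `Entities`, `Type`, `Text`, and `Score` fields belong to the Comprehend API, while the helper names and the de-duplication are illustrative choices rather than the post's original code.

```python
from __future__ import annotations

from typing import Any


def person_names(response: dict[str, Any], min_score: float = 0.0) -> list[str]:
    """Return unique PERSON entity texts, in the order they were detected."""
    names: list[str] = []
    for entity in response.get("Entities", []):
        if entity.get("Type") == "PERSON" and entity.get("Score", 0.0) >= min_score:
            if entity["Text"] not in names:
                names.append(entity["Text"])
    return names


def detect_people(comprehend: Any, text: str, language_code: str = "en") -> list[str]:
    """Call Amazon Comprehend and keep only the names of people.

    `comprehend` is expected to be a boto3 client,
    for example boto3.client("comprehend").
    """
    response = comprehend.detect_entities(Text=text, LanguageCode=language_code)
    return person_names(response)
```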

In this example, you upload an image to Amazon Simple Storage Service (Amazon S3), which triggers a workflow where you extract metadata from the image, including labels and any celebrities. You then transform that extracted metadata into an embedding and store all of this data in OpenSearch Service.

Summarize the article and generate an embedding

Summarizing the article is an important step to make sure that the word embedding captures the pertinent points of the article, and therefore returns images that resonate with the theme of the article.

The AI21 Labs Summarize model is very simple to use, without any prompt and with just a few lines of code.

You then use the GPT-J model to generate the embedding.
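The embedding call might look like the following sketch, which invokes a SageMaker endpoint through the boto3 `sagemaker-runtime` client. `invoke_endpoint` and its parameters are part of that client; the request shape `{"text_inputs": [...]}` and the response key `embedding` are assumptions about the deployed JumpStart model's contract, so verify them against your endpoint before relying on this.

```python
from __future__ import annotations

import json
from typing import Any


def embed_text(runtime: Any, endpoint_name: str, text: str) -> list[float]:
    """Return the embedding vector for one piece of text.

    `runtime` is expected to be boto3.client("sagemaker-runtime").
    The payload and response formats below are assumed, not confirmed.
    """
    response = runtime.invoke_endpoint(
        EndpointName=endpoint_name,
        ContentType="application/json",
        Body=json.dumps({"text_inputs": [text]}).encode("utf-8"),
    )
    payload = json.loads(response["Body"].read())
    # One input text was sent, so take the first (and only) vector.
    return payload["embedding"][0]
```

Using the same function, and therefore the same endpoint, for both the image metadata and the summarized article keeps the two sets of vectors in the same space.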

You then search OpenSearch Service for your images.

The query combines a match on the celebrity names with a k-NN score computed against the article vector.
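A sketch of such a query body, using the OpenSearch `script_score` query with the k-NN plugin's `knn_score` script and the `cosinesimil` space type. The field names `celebrities` and `image_vector` are hypothetical, and falling back to `match_all` when the article contains no names is an assumption about how the "if present" case could be handled.

```python
from __future__ import annotations

from typing import Any


def celebrity_vector_query(
    person_names: list[str],
    vector: list[float],
    size: int = 10,
) -> dict[str, Any]:
    """Build an exact k-NN (scoring script) query filtered by celebrity names."""
    if person_names:
        # Pre-filter: only score images whose celebrity field matches a name.
        filter_query: dict[str, Any] = {"match": {"celebrities": " ".join(person_names)}}
    else:
        # Assumed fallback: no names in the article, so score every image.
        filter_query = {"match_all": {}}
    return {
        "size": size,
        "query": {
            "script_score": {
                "query": filter_query,
                "script": {
                    "lang": "knn",
                    "source": "knn_score",
                    "params": {
                        "field": "image_vector",
                        "query_value": vector,
                        "space_type": "cosinesimil",
                    },
                },
            }
        },
    }
```

The returned dictionary would be sent as the search body to the image index; `size` controls how many images come back, 10 in this post.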

The architecture contains a simple web app to represent a content management system (CMS).

For an example article, we used the following input:

“Werner Vogels loved travelling around the globe in his Toyota. We see his Toyota come up in many scenes as he drives to go and meet various customers in their home towns.”

None of the images have any metadata with the word “Toyota,” but the semantics of the word “Toyota” are synonymous with cars and driving. Therefore, with this example, we can demonstrate how we can go beyond keyword search and return images that are semantically similar. In the above screenshot of the UI, the caption under the image shows the metadata Amazon Rekognition extracted.

You can include this solution in a larger workflow where you use the metadata you already extracted from your images to start using vector search along with other key terms, such as celebrity names, to return the best resonating images and documents for your search query.

Conclusion

In this post, we showed how you can use Amazon Rekognition, Amazon Comprehend, SageMaker, and OpenSearch Service to extract metadata from your images and then use ML techniques to discover them automatically using celebrity and semantic search. This is particularly important within the publishing industry, where speed matters in getting fresh content out quickly and to multiple platforms.

For more information about working with media assets, refer to Media intelligence just got smarter with Media2Cloud 3.0.

About the Author

Mark Watkins is a Solutions Architect within the Media and Entertainment team, helping his customers solve many data and ML problems. Away from professional life, he loves spending time with his family and watching his two little ones growing up.
