A quick-start tutorial

The landscape of artificial intelligence (AI), particularly Generative AI, has seen significant advancements recently. Large Language Models (LLMs) have been truly transformative in this regard. One popular approach to building an LLM application is Retrieval Augmented Generation (RAG), which combines the ability to leverage an organization’s data with the generative capabilities of these LLMs. Agents are a popular and useful way to introduce autonomous behaviour into LLM applications.

What is Agentic RAG?

Agentic RAG represents an advanced evolution in AI systems, where autonomous agents use RAG techniques to enhance their decision-making and response abilities. Unlike traditional RAG models, which often rely on user input to trigger actions, agentic RAG systems adopt a proactive approach. These agents autonomously seek out relevant information, analyse it and use it to generate responses or take specific actions. An agent is equipped with a set of tools and can judiciously select and use the appropriate tools for the given problem.
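To make tool selection concrete, here is a deliberately tiny sketch. Everything in it is hypothetical: the two tools, the in-memory corpus, and the `choose_tool` rule, which stands in for the decision an LLM would make. It only illustrates the select-a-tool-then-observe pattern, not how LangChain or any agent framework implements it.

```python
import re
from typing import Callable, Dict, Tuple

# Hypothetical mini knowledge base for the toy retriever.
CORPUS = {
    "react": "ReAct interleaves reasoning traces with tool-using actions.",
    "rag": "RAG retrieves documents and feeds them to an LLM as context.",
}


def retrieve(query: str) -> str:
    """Toy retriever: return the first corpus entry whose key appears in the query."""
    for key, text in CORPUS.items():
        if key in query.lower():
            return text
    return "No matching document."


def add_numbers(query: str) -> str:
    """Toy calculator: sum every integer found in the query."""
    return str(sum(int(n) for n in re.findall(r"-?\d+", query)))


TOOLS: Dict[str, Callable[[str], str]] = {"retrieve": retrieve, "add_numbers": add_numbers}


def choose_tool(question: str) -> str:
    # Hypothetical stand-in for the LLM's tool choice: numbers go to the calculator.
    return "add_numbers" if re.search(r"\d", question) else "retrieve"


def run_agent(question: str) -> Tuple[str, str]:
    """Pick a tool for the question, run it, and return (tool name, observation)."""
    tool_name = choose_tool(question)
    return tool_name, TOOLS[tool_name](question)


print(run_agent("What is ReAct?"))
print(run_agent("Add 2 and 40"))
```

In a real agent, the choice (and usually the tool input) comes from the model's output, and the observation is passed back to the model before it answers.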

This proactive behaviour is particularly useful in use cases such as customer service, research assistance, and complex problem-solving scenarios. By integrating the generative capability of LLMs with advanced retrieval techniques, agentic RAG can offer a much more effective AI solution.

Key Features of RAG Using Agents

1. Task Decomposition:

Agents can break down complex tasks into manageable subtasks, handling retrieval and generation step by step. This approach enhances the coherence and relevance of the final output.

2. Contextual Awareness:

RAG agents maintain contextual awareness throughout interactions, ensuring that retrieved information aligns with the ongoing conversation or task. This leads to more coherent and contextually appropriate responses.

3. Flexible Retrieval Strategies:

Agents can adapt their retrieval strategies based on the context, such as switching between dense and sparse retrieval or employing hybrid approaches. This balances relevance against speed.

4. Feedback Loops:

Agents often incorporate mechanisms that use user feedback to refine future retrievals and generations, which is crucial for applications that require continuous learning and adaptation.

5. Multi-Modal Capabilities:

Advanced RAG agents are starting to support multi-modal capabilities, handling and generating content across various media types (text, images, videos). This versatility is valuable for diverse use cases.

6. Scalability:

The agent architecture allows RAG systems to scale efficiently, managing large-scale retrieval while maintaining content quality, which makes them suitable for enterprise-level applications.

7. Explainability:

Some RAG agents are designed to provide explanations for their decisions, particularly in high-stakes applications, enhancing trust and transparency in the system’s outputs.
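The hybrid approach mentioned under Flexible Retrieval Strategies is often implemented by running a dense (embedding) search and a sparse (keyword, e.g. BM25) search separately and then merging their result lists. Below is a minimal sketch of one common merging technique, reciprocal rank fusion (RRF). The chunk IDs are made up, and `k = 60` is the constant conventionally used with RRF, not something this tutorial prescribes.

```python
from collections import defaultdict
from typing import Dict, List, Sequence


def reciprocal_rank_fusion(rankings: Sequence[Sequence[str]], k: int = 60) -> List[str]:
    """Merge several ranked lists of document IDs into one ranking.

    Each document earns 1 / (k + rank) from every list it appears in (ranks start at 1);
    documents are sorted by total score, with ties broken by ID for determinism.
    """
    scores: Dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


# Hypothetical results for the same query from two retrievers.
dense_hits = ["chunk-7", "chunk-2", "chunk-9"]   # ranked by embedding similarity
sparse_hits = ["chunk-9", "chunk-7", "chunk-4"]  # ranked by keyword match
print(reciprocal_rank_fusion([dense_hits, sparse_hits]))
# ['chunk-7', 'chunk-9', 'chunk-2', 'chunk-4']
```

Because RRF only looks at ranks, it sidesteps the problem that dense and sparse scores live on different scales.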

This blog post is a getting-started tutorial that guides the user through building an agentic RAG system using LangChain with IBM Watsonx.ai (for both embedding and generative capabilities) and the Milvus vector database service provided by IBM Watsonx.data (for storing the vectorized data chunks). For this tutorial, we have created a ReAct agent.

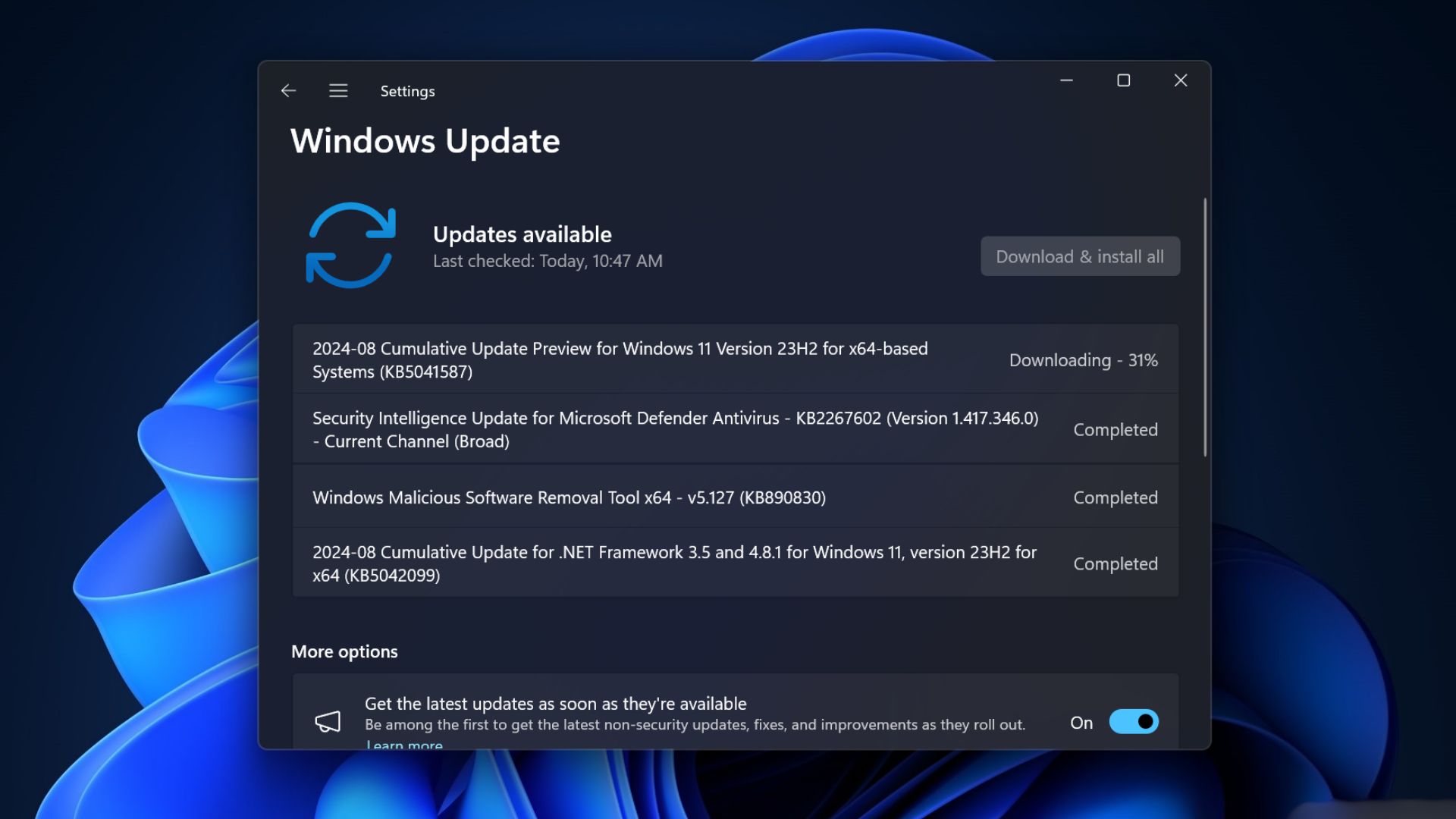

Step 1: Package installation

Let us first install the required Python packages. These include LangChain, the IBM Watsonx integration, the Milvus integration packages, and BeautifulSoup4 for web scraping.

```
%pip install langchain
%pip install langchain_ibm
%pip install BeautifulSoup4
%pip install langchain_community
%pip install langgraph
%pip install pymilvus
%pip install langchain_milvus
```

Step 2: Imports

Next, we import the required libraries to set up the environment and configure our LLM.

```python
import os
import re

import bs4
from langchain.tools.retriever import create_retriever_tool
from langchain_community.document_loaders import WebBaseLoader
from langchain_core.chat_history import BaseChatMessageHistory
from langchain_core.prompts import ChatPromptTemplate
from langchain_text_splitters import CharacterTextSplitter
from pymilvus import MilvusClient, DataType
```

Here, we are importing modules for web scraping, chat history, text splitting, and vector storage (Milvus).

Step 3: Configuring environment variables

We need to set up environment variables for IBM Watsonx, which will be used to access the LLM provided by Watsonx.ai.

```python
os.environ["WATSONX_APIKEY"] = "<Your_API_Key>"
os.environ["PROJECT_ID"] = "<Your_Project_ID>"
os.environ["GRPC_DNS_RESOLVER"] = "<Your_DNS_Resolver>"
```

Please make sure to replace the placeholder values with your actual credentials.
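Forgetting to replace a placeholder is an easy mistake. An optional check like the one below can fail fast before the model is initialized. `missing_env_vars` is a hypothetical helper, not part of any IBM or LangChain API, and it only looks at the two variables used for authentication and project selection.

```python
import os
from typing import Iterable, List

# Variables the tutorial relies on for authentication and project selection.
REQUIRED_VARS = ("WATSONX_APIKEY", "PROJECT_ID")


def missing_env_vars(names: Iterable[str] = REQUIRED_VARS) -> List[str]:
    """Return the variables that are unset, empty, or still hold a <placeholder> value."""
    missing = []
    for name in names:
        value = os.environ.get(name, "").strip()
        if not value or (value.startswith("<") and value.endswith(">")):
            missing.append(name)
    return missing


problems = missing_env_vars()
if problems:
    print(f"Please set real values for: {', '.join(problems)}")
```

You could run this right after setting the variables and raise an error instead of printing if the list is non-empty.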

Step 4: Initializing the Watsonx LLM

With the environment set up, we initialize the IBM Watsonx LLM with specific parameters to control the generation process. We are using the ChatWatsonx class here with the mistralai/mixtral-8x7b-instruct-v01 model from Watsonx.ai.

```python
from langchain_ibm import ChatWatsonx

# The API key is picked up from the WATSONX_APIKEY environment variable set in Step 3.
llm = ChatWatsonx(
    model_id="mistralai/mixtral-8x7b-instruct-v01",
    url="https://us-south.ml.cloud.ibm.com",
    project_id=os.getenv("PROJECT_ID"),
    params={
        "decoding_method": "sample",
        "max_new_tokens": 5879,
        "min_new_tokens": 2,
        "temperature": 0,
        "top_k": 50,
        "top_p": 1,
    },
)
```

This configuration sets up the LLM for text generation. We can tweak the inference parameters here to produce the desired responses. More information about model inference parameters and their permissible values is available here.
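Several of these parameters shape how the next token is picked. The sketch below is a generic illustration of temperature, `top_k` and `top_p` (nucleus) filtering over a toy set of token scores. It is not Watsonx.ai's implementation, and the exact semantics on the service (for example, how temperature 0 is handled in sample mode) may differ.

```python
import math
import random
from typing import Dict, List, Optional, Tuple


def sample_next_token(
    logits: Dict[str, float],
    temperature: float = 1.0,
    top_k: int = 50,
    top_p: float = 1.0,
    rng: Optional[random.Random] = None,
) -> str:
    """Pick the next token from raw scores using temperature, top-k and top-p filtering."""
    if temperature <= 0:
        # In this sketch, temperature 0 means greedy decoding: take the highest-scoring token.
        return max(logits, key=logits.get)
    # Softmax over temperature-scaled scores (subtracting the max for numerical stability).
    scaled = {tok: score / temperature for tok, score in logits.items()}
    peak = max(scaled.values())
    weights = {tok: math.exp(s - peak) for tok, s in scaled.items()}
    total = sum(weights.values())
    ranked: List[Tuple[str, float]] = sorted(
        ((tok, w / total) for tok, w in weights.items()), key=lambda pair: pair[1], reverse=True
    )
    # top_k: keep only the k most likely tokens.
    ranked = ranked[:top_k]
    # top_p: keep the smallest prefix whose cumulative probability reaches top_p.
    kept: List[Tuple[str, float]] = []
    cumulative = 0.0
    for tok, prob in ranked:
        kept.append((tok, prob))
        cumulative += prob
        if cumulative >= top_p:
            break
    rng = rng or random.Random()
    tokens = [tok for tok, _ in kept]
    probs = [prob for _, prob in kept]
    return rng.choices(tokens, weights=probs, k=1)[0]


scores = {"agent": 2.0, "tool": 1.0, "llm": 0.5}  # hypothetical token scores
print(sample_next_token(scores, temperature=0))
print(sample_next_token(scores, temperature=1.0, top_p=0.9, rng=random.Random(42)))
```

With temperature 0, as in the configuration above, this sketch always returns the top-scoring token, so `top_k` and `top_p` have no effect on the result.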

Step 5: Loading and splitting documents

We load the documents from a web page and split them into chunks to facilitate efficient retrieval. The generated chunks are stored in the Milvus instance that we have provisioned.

```python
loader = WebBaseLoader(
    web_paths=("https://lilianweng.github.io/posts/2023-06-23-agent/",),
    bs_kwargs=dict(
        parse_only=bs4.SoupStrainer(class_=("post-content", "post-title", "post-header"))
    ),
)
docs = loader.load()

text_splitter = CharacterTextSplitter(chunk_size=1500, chunk_overlap=200)
splits = text_splitter.split_documents(docs)
```

This code scrapes content from a specified web page, then splits the content into smaller segments, which will later be indexed for retrieval.
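The roles of `chunk_size` and `chunk_overlap` are easiest to see on a simplified splitter. The sketch below cuts text into fixed-size character windows, each repeating the last `chunk_overlap` characters of the previous one, so text near a boundary appears in both neighbouring chunks. LangChain's `CharacterTextSplitter` works differently in detail (it first splits on a separator and then merges the pieces up to `chunk_size`), so treat this as an illustration of the parameters, not a reimplementation.

```python
from typing import List


def split_with_overlap(text: str, chunk_size: int = 1500, chunk_overlap: int = 200) -> List[str]:
    """Cut text into windows of at most chunk_size characters.

    Consecutive windows share chunk_overlap characters: each new window
    starts chunk_size - chunk_overlap characters after the previous one.
    """
    if not 0 <= chunk_overlap < chunk_size:
        raise ValueError("need 0 <= chunk_overlap < chunk_size")
    step = chunk_size - chunk_overlap
    chunks: List[str] = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # this window already reaches the end of the text
    return chunks


print(split_with_overlap("abcdefghij", chunk_size=4, chunk_overlap=1))
# ['abcd', 'defg', 'ghij']
```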

Disclaimer: We have confirmed that this site allows scraping, but it’s important to always double-check a site’s permissions before scraping it. Websites can update their policies, so make sure your actions comply with their terms of use and relevant laws.

Step 6: Setting up the retriever

We establish a connection to Milvus to store the document embeddings and enable fast retrieval.

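```python
# AdpativeClient is the helper module from the tutorial's accompanying code.
from AdpativeClient import InMemoryMilvusStrategy, RemoteMilvusStrategy, BasicRAGHandler


def adapt(number_of_files=0, total_file_size=0, data_size_in_kbs=0.0):
    # Default to an in-memory Milvus instance; switch to the remote one once any limit is exceeded.
    strategy = InMemoryMilvusStrategy()
    if number_of_files > 10 or total_file_size > 10 or data_size_in_kbs > 0.25:
        strategy = RemoteMilvusStrategy()
    client = strategy.connect()
    return client


# Assumption: the data size is measured over the chunked text, in kilobytes.
total_size_kb = sum(len(doc.page_content.encode("utf-8")) for doc in splits) / 1024

# Pass the size by keyword; passed positionally it would be read as number_of_files.
client = adapt(data_size_in_kbs=total_size_kb)
handler = BasicRAGHandler(client)
retriever = handler.create_index(splits)
```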

This function decides whether to use an in-memory or a remote Milvus instance based on the size of the data, ensuring scalability and efficiency.
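The selection logic in `adapt` is a small instance of the strategy pattern. Since the tutorial's `InMemoryMilvusStrategy` and `RemoteMilvusStrategy` classes are not shown here, the sketch below uses hypothetical stand-ins that just report which store they represent, so the decision rule can be run and tested on its own. The thresholds are the ones used in `adapt`.

```python
from typing import Iterable, Protocol


class StoreStrategy(Protocol):
    def connect(self) -> str: ...


class LocalStoreStrategy:
    """Hypothetical stand-in for the in-memory Milvus option."""

    def connect(self) -> str:
        return "in-memory"


class RemoteStoreStrategy:
    """Hypothetical stand-in for the remote (Watsonx.data-hosted) Milvus option."""

    def connect(self) -> str:
        return "remote"


def data_size_kb(texts: Iterable[str]) -> float:
    """Total UTF-8 size of the given texts in kilobytes (1 KB = 1024 bytes)."""
    return sum(len(text.encode("utf-8")) for text in texts) / 1024


def choose_strategy(number_of_files: int = 0, total_file_size: float = 0,
                    data_size_in_kbs: float = 0.0) -> StoreStrategy:
    # Same rule as adapt(): exceeding any single threshold selects the remote store.
    if number_of_files > 10 or total_file_size > 10 or data_size_in_kbs > 0.25:
        return RemoteStoreStrategy()
    return LocalStoreStrategy()


print(choose_strategy(data_size_in_kbs=data_size_kb(["short chunk"])).connect())
```

Note that 0.25 KB is only 256 bytes, so with these thresholds practically any real document set, including the blog post loaded in Step 5, is routed to the remote instance.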

The BasicRAGHandler class covers the following functionality at a high level:

· Initializes the handler with a Milvus client, allowing interaction with the Milvus vector database provisioned by IBM Watsonx.data.

· Generates document embeddings, defines a schema, and creates an index in Milvus for efficient retrieval.

· Inserts the documents, their embeddings and metadata into a collection in Milvus.
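To show what those responsibilities amount to, here is a toy, dependency-free stand-in. Each row carries an ID, a vector, the chunk text and its metadata (roughly what a Milvus collection schema would define), and search ranks rows by cosine similarity to the query vector. The bag-of-words `embed` function is a placeholder for the Watsonx.ai embedding model, and `ToyCollection` is hypothetical; it does not reflect the actual `BasicRAGHandler` code or the pymilvus API.

```python
import math
import re
from collections import Counter
from typing import Dict, List, Optional, Tuple


def embed(text: str) -> Counter:
    """Placeholder 'embedding': a sparse bag-of-words count vector, not a real model."""
    return Counter(re.findall(r"\w+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(count * b[word] for word, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class ToyCollection:
    """In-memory stand-in for a vector collection with fields id, vector, text, metadata."""

    def __init__(self) -> None:
        self.rows: List[Dict] = []

    def insert(self, texts: List[str], metadatas: Optional[List[Dict]] = None) -> None:
        metadatas = metadatas or [{} for _ in texts]
        for text, metadata in zip(texts, metadatas):
            self.rows.append(
                {"id": len(self.rows), "vector": embed(text), "text": text, "metadata": metadata}
            )

    def search(self, query: str, limit: int = 3) -> List[Tuple[str, float]]:
        """Return up to `limit` (text, score) pairs, most similar first."""
        query_vec = embed(query)
        scored = [(cosine(query_vec, row["vector"]), row["text"]) for row in self.rows]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [(text, score) for score, text in scored[:limit]]


collection = ToyCollection()
collection.insert(
    ["ReAct combines reasoning and acting.", "Milvus stores embedding vectors."],
    [{"source": "blog"}, {"source": "docs"}],
)
print(collection.search("What is ReAct?", limit=1))
```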

Step 7: Defining the tools

With the retrieval system set up, we now define the retriever as a tool. The LLM can use this tool to perform context-based information retrieval.

```python
tool = create_retriever_tool(
    retriever,
    "blog_post_retriever",
    "Searches and returns excerpts from the Autonomous Agents blog post.",
)
tools = [tool]
```
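Conceptually, a retriever tool bundles a name and a description that the LLM reads when deciding which tool to call, plus a function that runs the retrieval and returns text. The hypothetical dataclass below mirrors that shape. As far as we know, `create_retriever_tool` similarly joins the retrieved documents' contents with a blank-line separator, but this sketch is not its implementation.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class ToyRetrieverTool:
    """Hypothetical, simplified analogue of a retriever tool."""

    name: str                             # identifier the agent uses to call the tool
    description: str                      # text the LLM reads when choosing a tool
    retrieve: Callable[[str], List[str]]  # query -> list of passage texts
    separator: str = "\n\n"

    def __call__(self, query: str) -> str:
        # Join the retrieved passages into one string the model can read as an observation.
        return self.separator.join(self.retrieve(query))


toy_tool = ToyRetrieverTool(
    name="blog_post_retriever",
    description="Searches and returns excerpts from the Autonomous Agents blog post.",
    retrieve=lambda query: [f"First excerpt about {query}.", f"Second excerpt about {query}."],
)
print(toy_tool("ReAct"))
```

The description matters more than it looks: it is the main signal the model has for deciding when this tool is relevant.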

Step 8: Generating responses

Finally, we can generate responses to user queries, leveraging the retrieved content.

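```python
from langgraph.prebuilt import create_react_agent
from langchain_core.messages import HumanMessage

agent_executor = create_react_agent(llm, tools)

response = agent_executor.invoke({"messages": [HumanMessage(content="What is ReAct?")]})
# The last message in the returned state holds the agent's final answer;
# earlier messages are the question, any tool calls and the tool results.
raw_content = response["messages"][-1].content
```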
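Under the hood, a ReAct agent alternates between reasoning about the question, calling a tool, and reading the tool's output until it can answer. The sketch below runs that loop with a scripted `policy` in place of the LLM and a fake `blog_post_retriever`; both are hypothetical. LangGraph's prebuilt agent drives the same cycle through the model's tool-calling interface rather than by parsing Thought/Action text, so this is a conceptual illustration only.

```python
from typing import Callable, Dict, List, Optional, Tuple

Step = Dict[str, str]


def react_loop(question: str,
               policy: Callable[[List[str]], Step],
               tools: Dict[str, Callable[[str], str]],
               max_steps: int = 5) -> Tuple[Optional[str], List[str]]:
    """Alternate Thought -> Action -> Observation until the policy returns an answer."""
    trace = [f"Question: {question}"]
    for _ in range(max_steps):
        step = policy(trace)
        trace.append(f"Thought: {step['thought']}")
        if "answer" in step:
            trace.append(f"Final Answer: {step['answer']}")
            return step["answer"], trace
        observation = tools[step["action"]](step["input"])
        trace.append(f"Action: {step['action']}[{step['input']}]")
        trace.append(f"Observation: {observation}")
    return None, trace  # gave up after max_steps without an answer


def scripted_policy(trace: List[str]) -> Step:
    """Hypothetical stand-in for the LLM: retrieve once, then answer from the observation."""
    observations = [line for line in trace if line.startswith("Observation: ")]
    if not observations:
        return {"thought": "I should search the blog post.",
                "action": "blog_post_retriever", "input": "ReAct"}
    return {"thought": "The excerpt answers the question.",
            "answer": observations[-1][len("Observation: "):]}


fake_tools = {"blog_post_retriever": lambda q: f"{q} interleaves reasoning steps with tool actions."}
answer, trace = react_loop("What is ReAct?", scripted_policy, fake_tools)
print("\n".join(trace))
```

Like `response["messages"]`, the trace starts with the question and ends with the final answer, which is why the last entry is the one to read.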

In this tutorial (link to code), we have demonstrated how to build a sample agentic RAG system using LangChain and IBM Watsonx. Agentic RAG systems mark a significant advancement in AI, combining the generative power of LLMs with the precision of sophisticated retrieval techniques. Their ability to autonomously provide contextually relevant and accurate information makes them increasingly valuable across various domains.

As the demand for more intelligent and interactive AI solutions continues to rise, mastering the integration of LLMs with retrieval tools will be essential. This approach not only enhances the accuracy of AI responses but also creates a more dynamic and user-centric interaction, paving the way for the next generation of AI-powered applications.

NOTE: This content is not affiliated with or endorsed by IBM and is in no way official IBM documentation. It is a personal project pursued out of personal interest, and the information is shared for the benefit of the community.