Generative AI and Large Language Models (LLMs) are often used interchangeably, but while they share some similarities, they differ significantly in purpose, architecture, and capabilities.

In this article, I will break down the difference between the two, explore the broader implications of generative AI, and critically examine the challenges and limitations of both technologies.

What is Generative AI?

Generative AI refers to a class of AI systems designed to create new content, whether it's text, images, music, or even video, based on patterns learned from existing data.

How Generative AI Works

At its core, generative AI works by learning patterns from vast amounts of data, such as images, text, or sounds.

The process involves feeding the AI huge datasets, allowing it to “understand” these patterns deeply enough to create something similar but entirely original.

The “generative” aspect means the AI doesn’t just recognize or classify information; it produces something new from scratch. Here’s how:

1. Neural Networks

Generative AI uses neural networks, which are algorithms inspired by how the human brain works.

These networks consist of layers of artificial neurons, each responsible for processing data.

Neural networks can be trained to recognize patterns in data and then generate new data that follows those patterns.
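To make "layers of artificial neurons" a little more concrete, here is a minimal sketch of a forward pass through a tiny two-layer network in plain Python. The layer sizes, the hand-picked weights, and the `sigmoid`, `dense`, and `forward` names are all made up for illustration; a real generative model learns its weights from data and is vastly larger.

```python
import math

def sigmoid(x: float) -> float:
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))

def dense(inputs, weights, biases):
    """One layer: each neuron takes a weighted sum of the inputs,
    adds its bias, and applies the sigmoid activation."""
    return [
        sigmoid(sum(w * x for w, x in zip(neuron_weights, inputs)) + b)
        for neuron_weights, b in zip(weights, biases)
    ]

# Hypothetical weights chosen by hand (a trained network would learn these).
hidden_weights = [[0.5, -0.2], [0.1, 0.8], [-0.3, 0.4]]  # 3 neurons, 2 inputs each
hidden_biases = [0.0, 0.1, -0.1]
output_weights = [[0.7, -0.5, 0.2]]                      # 1 neuron, 3 inputs
output_biases = [0.05]

def forward(inputs):
    """Pass the inputs through the hidden layer, then the output layer."""
    hidden = dense(inputs, hidden_weights, hidden_biases)
    return dense(hidden, output_weights, output_biases)

print(forward([1.0, 2.0]))  # a single value between 0 and 1
```

Training is the part this sketch leaves out: it would repeatedly nudge the weights so the outputs match patterns in the data.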

2. Recurrent Neural Networks (RNNs)

For tasks that involve sequences, like generating text or music, Recurrent Neural Networks (RNNs) are often used.

RNNs are a type of neural network designed to process sequential data by keeping a kind of “memory” of what came before.

For example, when generating a sentence, RNNs remember the words that were previously generated, allowing them to craft coherent sentences rather than random strings of words.
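The "memory" idea can be sketched in a few lines. The example below is only an illustration under simplifying assumptions: the weights are invented, the hidden state has two units, and `rnn_step` and `run_rnn` are hypothetical helper names. It shows that two sequences ending in the same input leave the network in different states because their histories differ.

```python
import math

def rnn_step(x, h, w_xh, w_hh, b):
    """One recurrent step: the new hidden state mixes the current
    input x with the previous hidden state h."""
    return [
        math.tanh(
            sum(w * xi for w, xi in zip(w_xh[j], x))
            + sum(w * hi for w, hi in zip(w_hh[j], h))
            + b[j]
        )
        for j in range(len(b))
    ]

def run_rnn(sequence, w_xh, w_hh, b):
    """Feed a sequence through the RNN one item at a time,
    returning the hidden state after every step."""
    h = [0.0] * len(b)  # the memory starts out empty
    states = []
    for x in sequence:
        h = rnn_step(x, h, w_xh, w_hh, b)
        states.append(h)
    return states

# Hypothetical weights: 2 hidden units, 1 input feature.
w_xh = [[0.5], [-0.3]]
w_hh = [[0.1, 0.4], [-0.2, 0.3]]
b = [0.0, 0.0]

seq_a = [[1.0], [0.0], [1.0]]
seq_b = [[0.0], [0.0], [1.0]]
states_a = run_rnn(seq_a, w_xh, w_hh, b)
states_b = run_rnn(seq_b, w_xh, w_hh, b)

# Same final input, different history, so the final states differ.
print(states_a[-1])
print(states_b[-1])
```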

3. Generative Adversarial Networks (GANs)

GANs work by pitting two neural networks against each other.

One network, the Generator, creates content (like an image), while the other network, the Discriminator, judges whether that content looks real or fake.

The Generator learns from the feedback of the Discriminator, gradually improving until it can produce content that’s indistinguishable from real data.

This method is particularly effective in generating high-quality images and videos.
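Here is a deliberately tiny sketch of that back-and-forth, assuming one-dimensional "data" (numbers clustered around 4) instead of images. Everything in it is an assumption made for illustration: the Generator is a straight line `a * z + b`, the Discriminator is a single sigmoid unit, and the learning rate and step count are arbitrary. It shows the shape of the training loop, not a recipe that is guaranteed to converge; GAN training is known for being unstable.

```python
import math
import random

def sigmoid(x):
    """Numerically safe sigmoid."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)

def train_toy_gan(steps=2000, lr=0.01, seed=0):
    rng = random.Random(seed)
    a, b = 1.0, 0.0  # Generator parameters: fake = a * z + b
    w, c = 0.1, 0.0  # Discriminator parameters: p_real = sigmoid(w * x + c)
    for _ in range(steps):
        real = rng.gauss(4.0, 0.5)  # a "real" sample
        z = rng.gauss(0.0, 1.0)     # random noise fed to the Generator
        fake = a * z + b

        # Discriminator step: push D(real) toward 1 and D(fake) toward 0
        # by descending the loss -log D(real) - log(1 - D(fake)).
        d_real = sigmoid(w * real + c)
        d_fake = sigmoid(w * fake + c)
        grad_w = -(1.0 - d_real) * real + d_fake * fake
        grad_c = -(1.0 - d_real) + d_fake
        w -= lr * grad_w
        c -= lr * grad_c

        # Generator step: use the Discriminator's feedback to raise D(fake)
        # by descending the loss -log D(fake).
        d_fake = sigmoid(w * fake + c)
        grad_fake = -(1.0 - d_fake) * w  # derivative of the loss w.r.t. fake
        a -= lr * grad_fake * z
        b -= lr * grad_fake
    return a, b, w, c

a, b, w, c = train_toy_gan()
print(f"Generator: fake = {a:.2f} * z + {b:.2f}")
```

Image GANs follow the same alternating pattern, with deep networks in place of the two one-line models.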

Examples of Generative AI

Image generators:

DALL-E: It can generate highly detailed images from textual descriptions, demonstrating its ability to understand and translate language into visual form.

Stable Diffusion: It allows users to generate a wide range of images, from realistic portraits to fantastical landscapes.

Music generators:

Udio: This AI tool can create original music compositions in various styles, from classical to electronic.

Jukebox: Another notable music generator, Jukebox is capable of producing realistic-sounding music in different genres and even imitating specific artists.

Video tools:

Runway: This versatile platform offers a suite of tools for video editing, animation, and generation. It can be used to create everything from simple animations to complex visual effects.

Topaz Video AI: This software focuses on enhancing and restoring video footage, using AI to improve quality, reduce noise, and even increase resolution.

What Are Large Language Models (LLMs)?

Large Language Models (LLMs) are a specialized form of artificial intelligence designed to understand and generate human language with remarkable proficiency.

Unlike general generative AI, which can create a variety of content, LLMs focus specifically on processing and generating text, making them integral to tasks like translation, summarization, and conversational AI.

How LLMs Work

At their core, LLMs leverage Natural Language Processing (NLP), a branch of AI dedicated to understanding and interpreting human language. The process begins with tokenization:

Tokenization

This involves breaking down a sentence into smaller units, typically words or subwords. In LLM terms, these units are called tokens.

For instance, the sentence “I love AI” might be tokenized as [“I”, “love”, “AI”]. These tokens serve as the building blocks for the model’s understanding.
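As a rough sketch of what that looks like in code, the snippet below splits a sentence into word-level tokens and maps each one to an integer ID, which is the form a model actually consumes. The `tokenize` and `build_vocab` helpers are hypothetical and deliberately naive; production LLMs typically use learned subword tokenizers rather than a regular expression.

```python
import re

def tokenize(text):
    """Split text into word and punctuation tokens
    (a simplification of real subword tokenizers)."""
    return re.findall(r"\w+|[^\w\s]", text)

def build_vocab(tokens):
    """Assign each distinct token an integer ID in order of first appearance."""
    vocab = {}
    for tok in tokens:
        if tok not in vocab:
            vocab[tok] = len(vocab)
    return vocab

tokens = tokenize("I love AI")
vocab = build_vocab(tokens)
ids = [vocab[t] for t in tokens]

print(tokens)  # ['I', 'love', 'AI']
print(ids)     # [0, 1, 2]
```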

Transformers

LLMs typically use an architecture called the transformer, a model design that revolutionized natural language processing.

Transformers work by analyzing relationships between words and their contexts across massive datasets.
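The mechanism transformers use to relate words to each other is called attention. Below is a minimal sketch of scaled dot-product attention in plain Python, assuming invented 2-dimensional vectors for three tokens. In a real transformer the queries, keys, and values come from learned projections of the token embeddings, and there are many attention heads and layers; none of that is shown here.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention over small lists of vectors.
    Returns the mixed output vectors and the attention weights."""
    d = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # How strongly does this token relate to every token?
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        weights = softmax(scores)
        # Blend the value vectors according to those weights.
        out = [sum(w * v[j] for w, v in zip(weights, values))
               for j in range(len(values[0]))]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights

# Hypothetical vectors standing in for the tokens "I", "love", "AI".
vectors = [[1.0, 0.0], [0.5, 0.5], [0.0, 1.0]]
outputs, weights = attention(vectors, vectors, vectors)
for row in weights:
    print([round(w, 2) for w in row])  # each row sums to 1
```

Each row of weights says how much one token "looks at" every other token when building its context-aware representation.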

In simple terms, think of them as supercharged auto-complete functions capable of writing essays, answering complex questions, or summarizing articles.
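To show what the plain, non-supercharged version of auto-complete looks like, here is a toy next-word predictor that only counts which word follows which. It is an assumption-laden sketch: the corpus is a made-up sentence pair, and `train_bigrams` and `autocomplete` are hypothetical names. An LLM does the same job of predicting the next token, but with a transformer conditioned on the whole context instead of a lookup on the previous word.

```python
from collections import Counter, defaultdict

def train_bigrams(text):
    """Count, for every word, which words follow it and how often."""
    words = text.lower().split()
    following = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        following[current][nxt] += 1
    return following

def autocomplete(following, start, max_words=5):
    """Repeatedly append the most frequent follower of the last word."""
    out = [start]
    for _ in range(max_words):
        candidates = following.get(out[-1])
        if not candidates:
            break  # the word was never seen with a follower
        out.append(candidates.most_common(1)[0][0])
    return " ".join(out)

corpus = "the cat sat on the mat . the cat sat on the sofa ."
model = train_bigrams(corpus)
print(autocomplete(model, "the", max_words=3))  # the cat sat on
```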

Examples of LLMs

Text generation:

GPT-3: One of the most well-known LLMs. It’s capable of producing human-like text, from writing essays to creating poetry.

GPT-4: Its more advanced successor, with further improvements such as memory, which allows it to maintain and access information from previous conversations.

Gemini: A notable LLM by Google, which focuses on enhancing text generation and understanding.

Generative AI and LLMs: A Unique Bond

Now that you’re familiar with the basics of generative AI and large language models (LLMs), let’s explore the transformative potential when these technologies are combined.

Here are some ideas:

Content Creation

For all the writer folks like me who may have run into writer's block, the combination of LLMs and generative AI enables the creation of unique and contextually relevant content across various media: text, images, and even music.

Talk to Your Documents

A fascinating real-world use case is how businesses and individuals can now scan documents and interact with them.

You could ask specific questions about the content, generate summaries, or request further insights without compromising privacy.

This approach is particularly valuable in fields where data confidentiality is crucial, such as law, healthcare, or education.

We’ve covered one such project called PrivateGPT.
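To give a feel for how "asking a document a question" can work, here is a minimal sketch of the retrieval step: split the document into chunks, pick the chunk that best matches the question, and build a prompt for a locally running LLM. This is not how PrivateGPT is implemented; the function names, the sample document, and the word-overlap scoring are assumptions for illustration, and real tools often use embedding-based similarity instead.

```python
import re

def words(text):
    """Lowercased set of words and numbers in the text."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def split_into_chunks(document):
    """Treat each non-empty paragraph as one chunk."""
    return [p.strip() for p in document.split("\n\n") if p.strip()]

def best_chunk(question, chunks):
    """Return the chunk sharing the most words with the question."""
    q = words(question)
    return max(chunks, key=lambda c: len(q & words(c)))

def build_prompt(question, context):
    """Prompt text that would be sent to a local LLM (not called here)."""
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"

document = """The lease term is 24 months starting in January.

The tenant pays a monthly rent of 1200 euros.

Pets are not allowed on the premises."""

question = "How much is the monthly rent?"
chunks = split_into_chunks(document)
context = best_chunk(question, chunks)
print(build_prompt(question, context))
```

Because both the document and the model stay on your own machine in this kind of setup, nothing has to be sent to a third party, which is where the privacy benefit comes from.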

Enhanced Chatbots and Virtual Assistants

Nobody likes the generic responses of customer service chatbots. The combination of LLMs and generative AI can power advanced chatbots that handle complex queries more naturally.

For instance, an LLM could help a virtual assistant understand a customer’s needs, while generative AI crafts detailed and engaging responses.

Open-source projects like Rasa, a customizable chatbot framework, have made this technology accessible for businesses looking for privacy and flexibility.

Improved Translation and Localization

When combined, LLMs and generative AI can significantly improve translation accuracy and cultural sensitivity.

For example, an LLM could handle the linguistic nuances of a language like Arabic, while generative AI produces culturally relevant images or content for the same audience.

Open-source projects like Marian NMT, a translation toolkit, and Unbabel Tower, an LLM, show promise in this area.

However, challenges remain, especially in dealing with idiomatic expressions or regional dialects, where AI can stumble.

Challenges and Limitations

Both generative AI and LLMs face significant challenges, many of which raise concerns about their real-world applications:

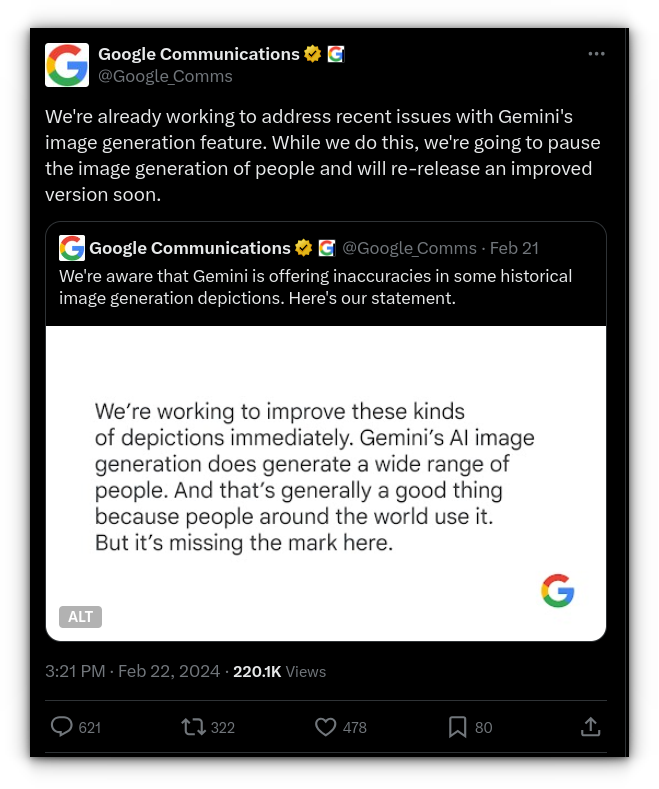

Bias

Generative AI and LLMs learn from the data they’re trained on. If the training data contains biases (e.g., discriminatory language or stereotypes), the AI will reflect those biases in its output.

This issue is especially problematic for LLMs, which generate text based on internet-sourced data, much of which contains inherent biases.

Hallucinations

A unique problem for LLMs is “hallucination,” where the model generates false or nonsensical information with unwarranted confidence.

Generative AI might create something visually incoherent that’s easy to detect (like a distorted image), but an LLM might subtly present incorrect information in a way that appears entirely plausible, making it harder to spot.

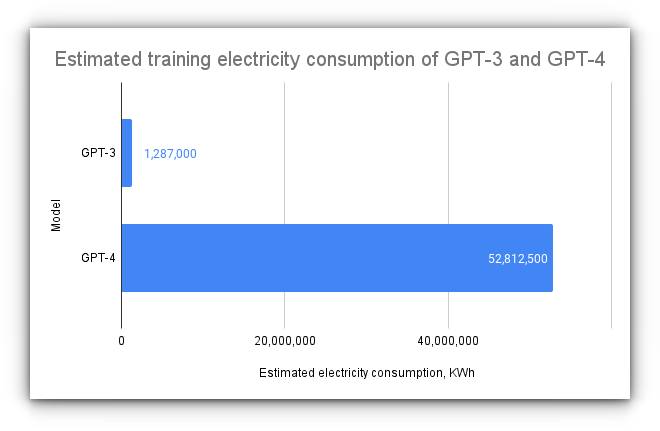

Resource Intensiveness

Training both generative AI and LLMs requires vast computational resources. It’s not just about processing power, but also storage and energy.

This raises concerns about the environmental impact of large-scale AI training.
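A quick back-of-the-envelope calculation shows why. The sketch below estimates the memory needed just to hold a model's weights, assuming a hypothetical 7-billion-parameter model and 2 bytes per parameter (16-bit numbers); `weights_memory_gb` is a made-up helper name. Training needs considerably more than this, since gradients, optimizer state, and intermediate activations also have to fit in memory.

```python
def weights_memory_gb(num_parameters, bytes_per_parameter=2):
    """Memory for the weights alone, in gigabytes (1 GB = 10**9 bytes)."""
    return num_parameters * bytes_per_parameter / 1e9

# Hypothetical 7-billion-parameter model.
print(weights_memory_gb(7e9))     # 14.0 GB with 16-bit weights
print(weights_memory_gb(7e9, 4))  # 28.0 GB with 32-bit weights
```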

Ethical Concerns

The ability of generative AI to produce near-perfect imitations of images, voices, and even personalities poses ethical questions.

How do we differentiate between AI-generated and human-made content? With LLMs, the question becomes: how do we prevent the spread of misinformation or the misuse of AI for malicious purposes?

Key Takeaways

The way generative AI and LLMs complement each other is mind-blowing. Whether it’s producing vivid imagery from simple text or creating human-like conversations, the possibilities seem endless.

However, one of my biggest concerns is that companies are training their models on user data without explicit permission.

This practice raises serious privacy issues: if everything we do online is being fed into AI, what’s left that’s truly personal or private? It feels like we’re inching closer to a world where data ownership becomes a relic of the past.
