In this post, we discuss how United Airlines, in collaboration with the Amazon Machine Learning Solutions Lab, built an active learning framework on AWS to automate the processing of passenger documents.

“In order to deliver the best flying experience for our passengers and make our internal business process as efficient as possible, we have developed an automated machine learning-based document processing pipeline in AWS. In order to power these applications, as well as those using other data modalities like computer vision, we need a robust and efficient workflow to quickly annotate data, train and evaluate models, and iterate quickly. Over the course of a couple of months, United partnered with the Amazon Machine Learning Solutions Lab to design and develop a reusable, use case-agnostic active learning workflow using AWS CDK. This workflow will be foundational to our unstructured data-based machine learning applications as it will enable us to minimize human labeling effort, deliver strong model performance quickly, and adapt to data drift.”

– Jon Nelson, Senior Manager of Data Science and Machine Learning at United Airlines.

Problem

United’s Digital Technology team is made up of globally diverse individuals working together with cutting-edge technology to drive business outcomes and keep customer satisfaction levels high. They wanted to take advantage of machine learning (ML) techniques such as computer vision (CV) and natural language processing (NLP) to automate document processing pipelines. As part of this strategy, they developed an in-house passport analysis model to verify passenger IDs. The process relies on manual annotations to train ML models, and those annotations are very costly.

United wanted to create a flexible, resilient, and cost-efficient ML framework for automating passport information verification, validating passengers’ identities, and detecting possible fraudulent documents. They engaged the ML Solutions Lab to help achieve this goal, which allows United to continue delivering world-class service in the face of future passenger growth.

Solution overview

Our joint team designed and developed an active learning framework powered by the AWS Cloud Development Kit (AWS CDK), which programmatically configures and provisions all necessary AWS services. The framework uses Amazon SageMaker to process unlabeled data, creates soft labels, launches manual labeling jobs with Amazon SageMaker Ground Truth, and trains an arbitrary ML model with the resulting dataset. We used Amazon Textract to automate information extraction from specific document fields such as name and passport number. At a high level, the approach can be described with the following diagram.

Data

The primary dataset for this problem comprises tens of thousands of main-page passport images from which personal information (name, date of birth, passport number, and so on) must be extracted. Image size, layout, and structure vary depending on the document issuing country. We normalize these images into a set of uniform thumbnails, which constitute the functional input for the active learning pipeline (auto-labeling and inference).

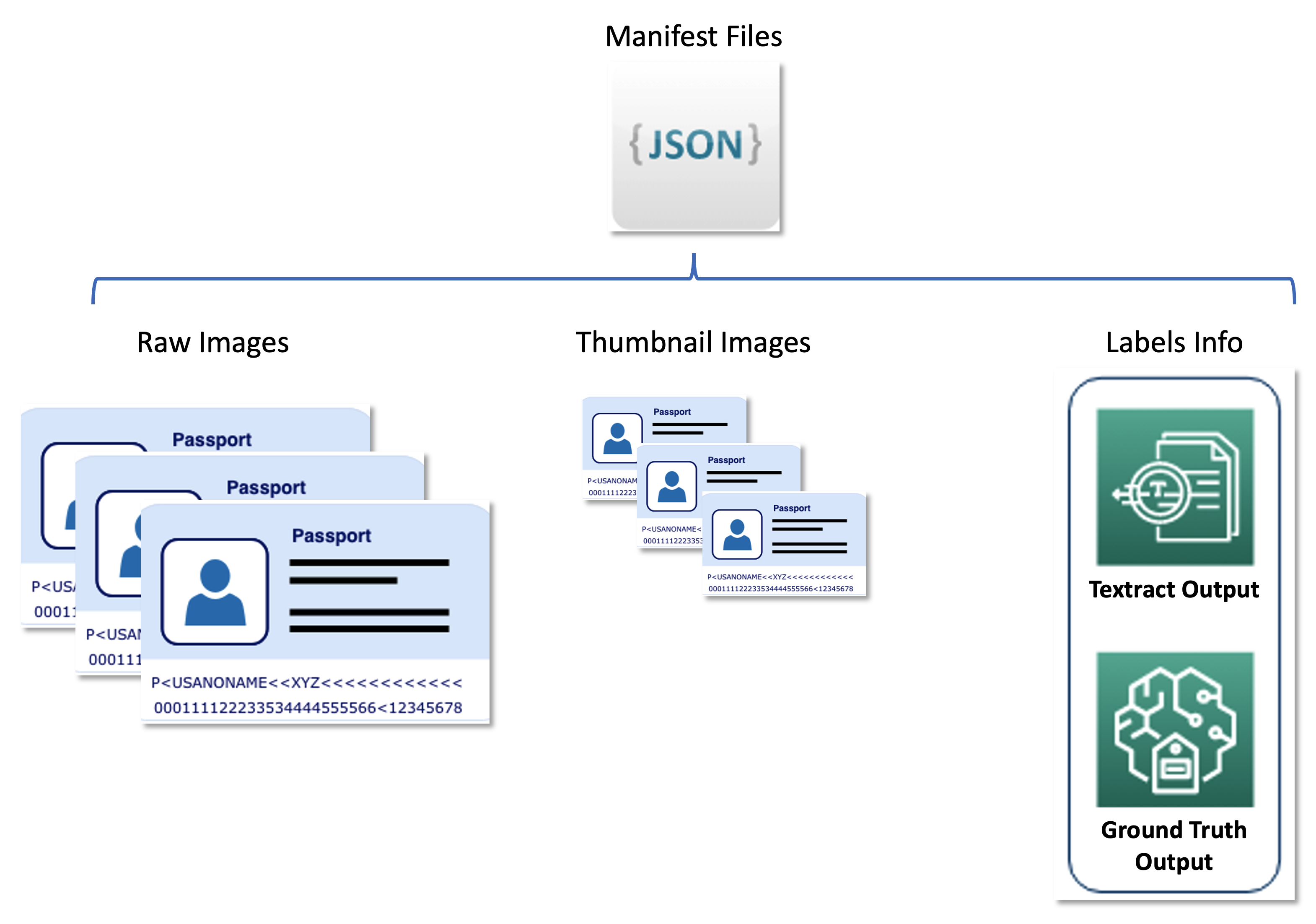

The second dataset contains JSON Lines formatted manifest files that relate raw passport images, thumbnail images, and label information such as soft labels and bounding box positions. Manifest files serve as a metadata set storing results from various AWS services in a unified format, and decouple the active learning pipeline from downstream services used by United. The following diagram illustrates this architecture.

The next code is an instance manifest file:
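A minimal sketch of such a manifest, assuming hypothetical field names (`raw_image`, `thumbnail`, `soft_labels`, `bounding_boxes`) and placeholder S3 paths. These names are illustrative only and are not taken from the schema used in the actual solution. It shows the one-JSON-object-per-line layout and a round trip through it:

```python
import json

# Hypothetical manifest records: every key and path below is an
# illustrative assumption, not the schema used in the actual solution.
records = [
    {
        "raw_image": "s3://example-bucket/raw/passport_0001.jpg",
        "thumbnail": "s3://example-bucket/thumbnails/passport_0001.png",
        "soft_labels": [{"field": "passport_number", "confidence": 0.62}],
        "bounding_boxes": [{"left": 120, "top": 48, "width": 210, "height": 32}],
    },
    {
        "raw_image": "s3://example-bucket/raw/passport_0002.jpg",
        "thumbnail": "s3://example-bucket/thumbnails/passport_0002.png",
        "soft_labels": [],
        "bounding_boxes": [],
    },
]


def to_json_lines(items):
    """Serialize one JSON object per line (the JSON Lines layout)."""
    return "\n".join(json.dumps(item) for item in items)


def from_json_lines(text):
    """Parse JSON Lines text back into a list of dicts, skipping blank lines."""
    return [json.loads(line) for line in text.splitlines() if line.strip()]


manifest_text = to_json_lines(records)
round_trip = from_json_lines(manifest_text)
```

Because each image occupies exactly one line, a service can append its results to a record without having to understand the fields written by other services.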

Solution components

The solution consists of two main components:

An ML framework, which is responsible for training the model

An auto-labeling pipeline, which is responsible for improving trained model accuracy in a cost-efficient manner

The ML framework is responsible for training the ML model and deploying it as a SageMaker endpoint. The auto-labeling pipeline focuses on automating SageMaker Ground Truth jobs and sampling images for labeling through those jobs.

The two components are decoupled from each other and only interact through the set of labeled images produced by the auto-labeling pipeline. That is, the labeling pipeline creates labels that are later used by the ML framework to train the ML model.

ML framework

The ML Solutions Lab team built the ML framework using the Hugging Face implementation of the state-of-the-art LayoutLMv2 model (LayoutLMv2: Multi-modal Pre-training for Visually-Rich Document Understanding, Yang Xu, et al.). Training was based on Amazon Textract outputs, which served as a preprocessor and produced bounding boxes around text of interest. The framework uses distributed training and runs on a custom Docker container based on the SageMaker pre-built Hugging Face image with additional dependencies (dependencies that are missing in the pre-built SageMaker Docker image but required for Hugging Face LayoutLMv2).

The ML model was trained to classify document fields into the following 11 classes:

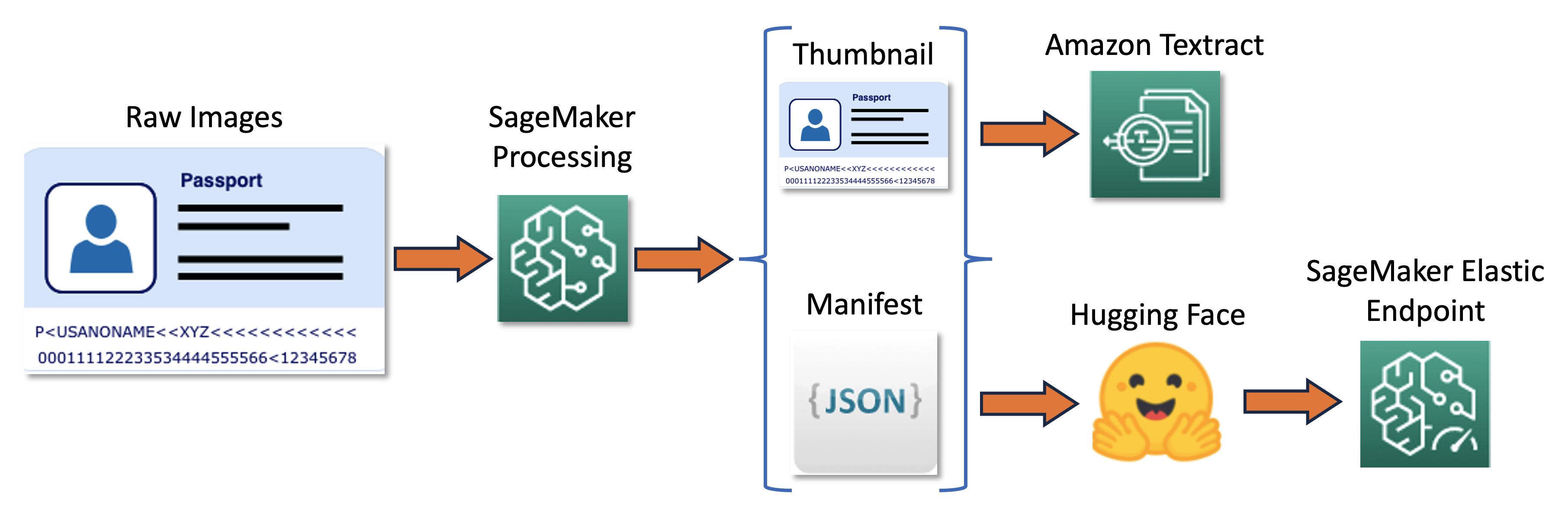

The training pipeline can be summarized in the following diagram.

First, we resize and normalize a batch of raw images into thumbnails. At the same time, a JSON Lines manifest file with one line per image is created with information about raw and thumbnail images from the batch. Next, we use Amazon Textract to extract text bounding boxes in the thumbnail images. All information produced by Amazon Textract is recorded in the same manifest file. Finally, we use the thumbnail images and manifest data to train a model, which is later deployed as a SageMaker endpoint.
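The Textract step can be sketched as follows. Amazon Textract reports each bounding box as ratios of the page width and height, while the Hugging Face LayoutLMv2 model expects integer boxes on a 0 to 1000 grid. The helper name `textract_words_to_layoutlm` and the sample response are hypothetical, and the post does not describe how the actual pipeline performs this conversion, so treat this as one plausible approach rather than the implemented one:

```python
def _scale(ratio):
    """Map a 0-1 page ratio to an integer on the 0-1000 grid, clamped."""
    return max(0, min(1000, round(ratio * 1000)))


def textract_words_to_layoutlm(response):
    """Return (words, boxes) from a Textract-style response dict.

    Boxes are [x0, y0, x1, y1] on the 0-1000 scale used by LayoutLMv2.
    Only WORD blocks are kept; PAGE and LINE blocks are skipped.
    """
    words, boxes = [], []
    for block in response.get("Blocks", []):
        if block.get("BlockType") != "WORD":
            continue
        bb = block["Geometry"]["BoundingBox"]  # ratios of page width/height
        words.append(block["Text"])
        boxes.append([
            _scale(bb["Left"]),
            _scale(bb["Top"]),
            _scale(bb["Left"] + bb["Width"]),
            _scale(bb["Top"] + bb["Height"]),
        ])
    return words, boxes


# Hand-written sample in the shape of a Textract response (not real output).
sample_response = {
    "Blocks": [
        {"BlockType": "PAGE",
         "Geometry": {"BoundingBox": {"Left": 0.0, "Top": 0.0,
                                      "Width": 1.0, "Height": 1.0}}},
        {"BlockType": "WORD", "Text": "SMITH",
         "Geometry": {"BoundingBox": {"Left": 0.25, "Top": 0.5,
                                      "Width": 0.25, "Height": 0.125}}},
    ]
}

words, boxes = textract_words_to_layoutlm(sample_response)
```

The resulting word and box lists are the kind of information that could be written back into each image's manifest line before training.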

Auto-labeling pipeline

We developed an auto-labeling pipeline designed to perform the following functions:

Run periodic batch inference on an unlabeled dataset.

Filter results based on a specific uncertainty sampling strategy.

Trigger a SageMaker Ground Truth job to label the sampled images using a human workforce.

Add newly labeled images to the training dataset for subsequent model refinement.

The uncertainty sampling strategy reduces the number of images sent to the human labeling job by selecting the images that would likely contribute the most to improving model accuracy. Because human labeling is an expensive task, such sampling is an important cost reduction technique. We support four sampling strategies, which can be selected as a parameter stored in Parameter Store, a capability of AWS Systems Manager:

Least confidence

Margin confidence

Ratio of confidence

Entropy
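The four strategies can be sketched with their common textbook definitions. The post does not give the exact formulas used, so the scoring functions below are assumptions, as are the function names and the simplification that each image has a single class-probability vector (the real model classifies individual document fields, which would need an aggregation step). In every case a higher score means a more uncertain prediction:

```python
import math


def least_confidence(probs):
    """1 - max probability, rescaled to [0, 1] for n classes."""
    n = len(probs)
    return (1.0 - max(probs)) * n / (n - 1)


def margin_confidence(probs):
    """1 - (gap between the two most likely classes)."""
    top, second = sorted(probs, reverse=True)[:2]
    return 1.0 - (top - second)


def ratio_confidence(probs):
    """Second most likely probability divided by the most likely one."""
    top, second = sorted(probs, reverse=True)[:2]
    return second / top


def entropy(probs):
    """Shannon entropy normalized by log(n), so uniform predictions score 1."""
    h = -sum(p * math.log(p) for p in probs if p > 0)
    return h / math.log(len(probs))


# Strategy names are hypothetical; in the pipeline the choice would come
# from a configuration value such as a Parameter Store entry.
STRATEGIES = {
    "least_confidence": least_confidence,
    "margin_confidence": margin_confidence,
    "ratio_confidence": ratio_confidence,
    "entropy": entropy,
}


def select_for_labeling(predictions, strategy, k):
    """Return the ids of the k most uncertain items.

    predictions: list of (image_id, class_probabilities) pairs.
    """
    score = STRATEGIES[strategy]
    ranked = sorted(predictions, key=lambda item: score(item[1]), reverse=True)
    return [image_id for image_id, _ in ranked[:k]]


predictions = [
    ("img-a", [0.90, 0.05, 0.05]),  # confident prediction
    ("img-b", [0.40, 0.35, 0.25]),  # near-uniform, very uncertain
    ("img-c", [0.60, 0.30, 0.10]),  # moderately uncertain
]
to_label = select_for_labeling(predictions, "entropy", k=2)
```

On this toy input all four strategies pick the same two images, but on real data they can rank items differently, which is one reason to keep the strategy configurable.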

The entire auto-labeling workflow was implemented with AWS Step Functions, which orchestrates the processing job (called the elastic endpoint for batch inference), uncertainty sampling, and SageMaker Ground Truth. The following diagram illustrates the Step Functions workflow.

Cost-efficiency

The main factor influencing labeling costs is manual annotation. Before deploying this solution, the United team had to use a rule-based approach, which required expensive manual data annotation and third-party parsing OCR techniques. With our solution, United reduced their manual labeling workload by manually labeling only the images that would result in the largest model improvements. Because the framework is model-agnostic, it can be used in other similar scenarios, extending its value beyond passport images to a broader set of documents.

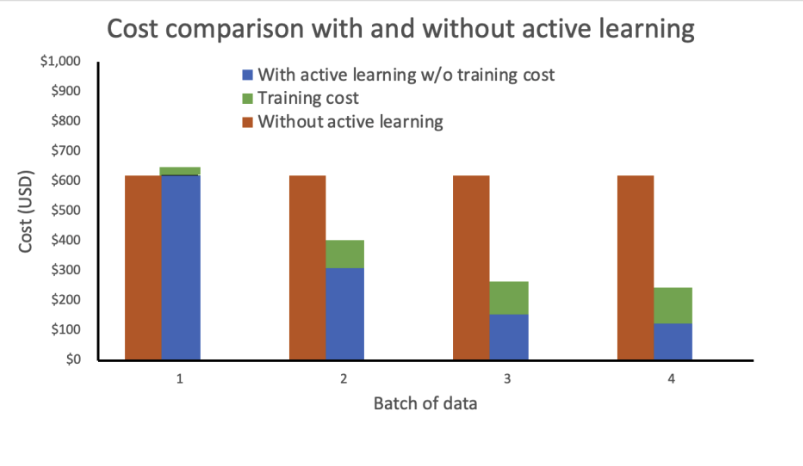

We performed a cost analysis based on the following assumptions:

Each batch contains 1,000 images

Training is performed using an ml.g4dn.16xlarge instance

Inference is performed on an ml.g4dn.xlarge instance

Training is done after each batch with 10% of annotated labels

Each round of training results in the following accuracy improvements:

50% after the primary batch

25% after the second batch

10% after the third batch

Our analysis shows that training cost remains constant and high without active learning. Incorporating active learning results in exponentially decreasing costs with each new batch of data.

We further reduced costs by deploying the inference endpoint as an elastic endpoint by adding an auto scaling policy. The endpoint resources can scale up or down between zero and a configured maximum number of instances.
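As a rough sketch of what such a policy can look like, the helper below builds the two request payloads that Application Auto Scaling expects for a SageMaker endpoint variant. The helper name, endpoint name, policy name, target value, and cooldowns are all hypothetical, and the post does not say which metric or policy type the actual solution used. Whether a minimum capacity of zero is accepted depends on the endpoint type, so that default is an assumption taken from the description above:

```python
def build_endpoint_scaling_requests(endpoint_name, variant_name, max_instances,
                                    min_instances=0, target_invocations=100.0):
    """Build kwargs for register_scalable_target and put_scaling_policy.

    All values are illustrative; tune them for the actual workload.
    """
    common = {
        "ServiceNamespace": "sagemaker",
        "ResourceId": f"endpoint/{endpoint_name}/variant/{variant_name}",
        "ScalableDimension": "sagemaker:variant:DesiredInstanceCount",
    }
    target = dict(common, MinCapacity=min_instances, MaxCapacity=max_instances)
    policy = dict(
        common,
        PolicyName=f"{endpoint_name}-invocations-target",  # hypothetical name
        PolicyType="TargetTrackingScaling",
        TargetTrackingScalingPolicyConfiguration={
            "TargetValue": target_invocations,
            "PredefinedMetricSpecification": {
                "PredefinedMetricType": "SageMakerVariantInvocationsPerInstance",
            },
            "ScaleInCooldown": 300,   # seconds, assumed
            "ScaleOutCooldown": 60,   # seconds, assumed
        },
    )
    return target, policy


target, policy = build_endpoint_scaling_requests(
    "passport-endpoint", "AllTraffic", max_instances=4
)

# Applying the configuration (not run here; needs boto3 and AWS credentials):
#   import boto3
#   client = boto3.client("application-autoscaling")
#   client.register_scalable_target(**target)
#   client.put_scaling_policy(**policy)
```

Keeping the payloads in plain dictionaries makes it straightforward to source the capacity limits from configuration instead of hard-coding them.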

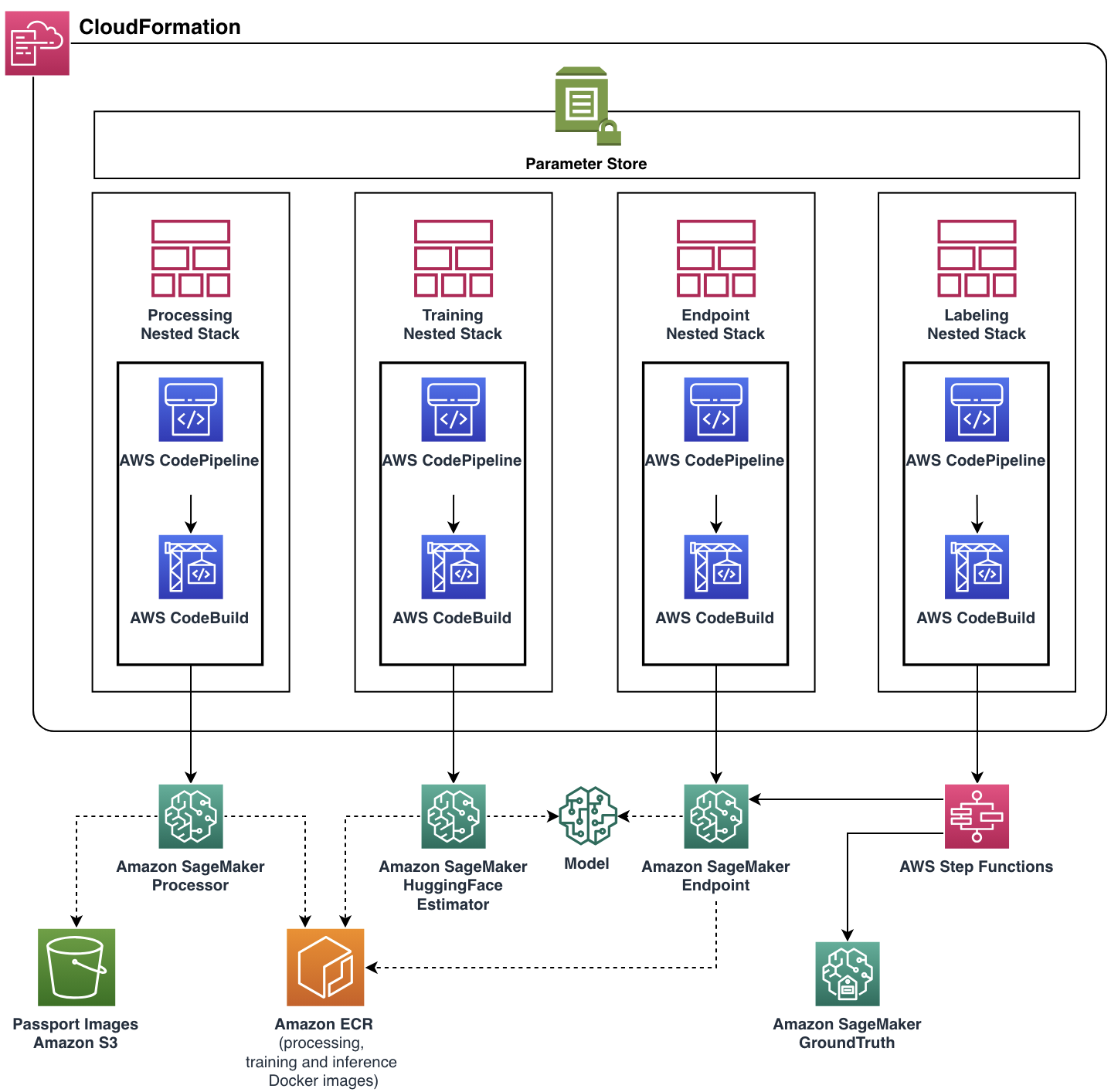

Final solution architecture

Our focus was to help the United team meet their functional requirements while building a scalable and flexible cloud application. The ML Solutions Lab team developed the complete production-ready solution with the help of AWS CDK, automating management and provisioning of all cloud resources and services. The final cloud application was deployed as a single AWS CloudFormation stack with four nested stacks, each representing a single functional component.

Almost every pipeline feature, including Docker images, endpoint auto scaling policy, and more, was parameterized through Parameter Store. With such flexibility, the same pipeline instance could be run with a broad range of settings, adding the ability to experiment.

Conclusion

In this post, we discussed how United Airlines, in collaboration with the ML Solutions Lab, built an active learning framework on AWS to automate the processing of passenger documents. The solution had great impact on two important aspects of United’s automation goals:

Reusability – Due to the modular design and model-agnostic implementation, United Airlines can reuse this solution on almost any other auto-labeling ML use case

Recurring cost reduction – By intelligently combining manual and auto-labeling processes, the United team can reduce average labeling costs and replace expensive third-party labeling services

If you are interested in implementing a similar solution or want to learn more about the ML Solutions Lab, contact your account manager or visit us at Amazon Machine Learning Solutions Lab.

About the Authors

Xin Gu is the Lead Data Scientist – Machine Learning at United Airlines’ Advanced Analytics and Innovation division. She contributed significantly to designing machine-learning-assisted document understanding automation and played a key role in expanding data annotation active learning workflows across diverse tasks and models. Her expertise lies in elevating AI efficacy and efficiency, achieving remarkable progress in the field of intelligent technological advancements at United Airlines.

Jon Nelson is the Senior Manager of Data Science and Machine Learning at United Airlines.

Alex Goryainov is a Machine Learning Engineer at Amazon AWS. He builds architecture and implements core components of the active learning and auto-labeling pipeline powered by AWS CDK. Alex is an expert in MLOps, cloud computing architecture, statistical data analysis, and large-scale data processing.

Vishal Das is an Applied Scientist at the Amazon ML Solutions Lab. Prior to MLSL, Vishal was a Solutions Architect, Energy, AWS. He received his PhD in Geophysics with a PhD minor in Statistics from Stanford University. He is committed to working with customers in helping them think big and deliver business results. He is an expert in machine learning and its application in solving business problems.

Tianyi Mao is an Applied Scientist at AWS based out of the Chicago area. He has 5+ years of experience in building machine learning and deep learning solutions and focuses on computer vision and reinforcement learning with human feedback. He enjoys working with customers to understand their challenges and solve them by creating innovative solutions using AWS services.

Yunzhi Shi is an Applied Scientist at the Amazon ML Solutions Lab, where he works with customers across different industry verticals to help them ideate, develop, and deploy AI/ML solutions built on AWS Cloud services to solve their business challenges. He has worked with customers in automotive, geospatial, transportation, and manufacturing. Yunzhi obtained his Ph.D. in Geophysics from The University of Texas at Austin.

Diego Socolinsky is a Senior Applied Science Manager with the AWS Generative AI Innovation Center, where he leads the delivery team for the Eastern US and Latin America regions. He has over twenty years of experience in machine learning and computer vision, and holds a PhD degree in mathematics from The Johns Hopkins University.

Xin Chen is currently the Head of People Science Solutions Lab at Amazon People eXperience Technology (PXT, aka HR) Central Science. He leads a team of applied scientists to build production-grade science solutions to proactively identify and launch mechanisms and process improvements. Previously, he was head of Central US, Greater China Region, LATAM and Automotive Vertical in AWS Machine Learning Solutions Lab. He helped AWS customers identify and build machine learning solutions to address their organization’s highest return-on-investment machine learning opportunities. Xin is adjunct faculty at Northwestern University and Illinois Institute of Technology. He obtained his PhD in Computer Science and Engineering at the University of Notre Dame.
