ControlNet is a neural network that can improve image generation in Stable Diffusion by adding extra conditions. This allows users to have more control over the images generated. Instead of trying out different prompts, the ControlNet models enable users to generate consistent images with just one prompt.

In this post, you’ll learn how to gain precise control over images generated by Stable Diffusion using ControlNet. Specifically, we’ll cover:

What ControlNet is, and how it works

How to use ControlNet with the Hugging Face Spaces

Using ControlNet with the Stable Diffusion WebUI

Let’s get started.

Using ControlNet with Stable Diffusion. Photo by Nadine Shaabana. Some rights reserved.

Overview

This post is in four parts; they are:

What is ControlNet?

ControlNet in Hugging Face Space

Scribble Interactive

ControlNet in Stable Diffusion Web UI

What is ControlNet?

ControlNet is a neural network architecture that can be used to control diffusion models. In addition to the prompt you would usually provide to create the output image, it works by adding extra conditioning to the diffusion model, with an input image as the additional constraint to guide the diffusion process.

There are many types of conditioning inputs (canny edge, user sketching, human pose, depth, etc.) that can give a diffusion model more control over image generation.

Some examples of how ControlNet can control diffusion models:

By providing a specific human pose, an image mimicking the same pose is generated.

Make the output follow the style of another image.

Turn scribbles into high-quality images.

Generate a similar image using a reference image.

Inpainting missing parts of an image.

Block diagram of how ControlNet modified the diffusion process. Figure from Zhang et al (2023)

ControlNet works by copying the weights from the original diffusion model into two sets:

A “locked” set that preserves the original model

A “trainable” set that learns the new conditioning.

The ControlNet model essentially produces a difference vector in the latent space, which modifies the image that the diffusion model would otherwise produce. As an equation, if the original model produces output image $y$ from prompt $x$ using a function $y=F(x;\Theta)$, then the output in the case of ControlNet would be

$$y_c = F(x;\Theta) + Z(F(x+Z(c;\Theta_{z1}); \Theta_c); \Theta_{z2})$$

in which the function $Z(\cdot;\Theta_z)$ is the zero convolution layer, and $\Theta_c, \Theta_{z1}, \Theta_{z2}$ are parameters from the ControlNet model. The zero-convolution layers have weights and biases initialized with zero, so they don’t initially cause distortion. As training happens, these layers learn to meet the conditioning constraints. This structure allows training ControlNet even on small machines. Note that the same diffusion architecture (e.g., Stable Diffusion 1.x) is used twice but with different model parameters $\Theta$ and $\Theta_c$. And now, you need to provide two inputs, $x$ and $c$, to create the output $y$.
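To make the role of the zero convolution concrete, here is a minimal sketch of the equation above using plain Python lists. The functions `F` and `Z` are toy stand-ins invented for illustration (an elementwise scale with ReLU, and an elementwise affine map); they are not the real network blocks, and the numbers are arbitrary. The point is only that with zero-initialized $Z$, the ControlNet output equals the original model's output.

```python
# Toy illustration of y_c = F(x; Theta) + Z(F(x + Z(c; Theta_z1); Theta_c); Theta_z2).
# F and Z are hypothetical stand-ins, not the real ControlNet layers.

def F(x, theta):
    """Stand-in for a network block: scale each element, then apply a ReLU."""
    return [max(0.0, theta * v) for v in x]

def Z(v, weight, bias):
    """Stand-in for a zero convolution: an elementwise affine map."""
    return [weight * e + bias for e in v]

def controlnet_output(x, c, theta, theta_c, z1, z2):
    locked = F(x, theta)                                  # frozen original block
    conditioned = [a + b for a, b in zip(x, Z(c, *z1))]   # x + Z(c; Theta_z1)
    trainable = F(conditioned, theta_c)                   # trainable copy
    residual = Z(trainable, *z2)                          # Z(...; Theta_z2)
    return [a + b for a, b in zip(locked, residual)]

x = [1.0, -2.0, 3.0]   # toy features derived from the prompt
c = [0.5, 0.5, 0.5]    # toy conditioning features (e.g., from an edge map)
theta = 2.0            # locked weights
theta_c = 2.0          # the trainable copy starts from the same weights

y = F(x, theta)
# Zero-initialized weights and biases: the conditioning has no effect yet.
y_init = controlnet_output(x, c, theta, theta_c, z1=(0.0, 0.0), z2=(0.0, 0.0))
# After (pretend) training, the zero convolutions are no longer zero.
y_trained = controlnet_output(x, c, theta, theta_c, z1=(1.0, 0.0), z2=(0.5, 0.0))

print(y, y_init, y_trained)
```

With zero weights and biases, `y_init` is identical to `y`, which is why training can start from the unmodified behavior of the original model. Once the zero-convolution parameters move away from zero, the conditioning `c` begins to shift the output.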

The design of running ControlNet and the original diffusion model separately allows fine-tuning on small datasets without destroying the original diffusion model. It also allows the same ControlNet to be used with different diffusion models as long as the architecture is compatible. The modular and fast-adapting nature of ControlNet makes it a versatile approach for gaining more precise control over image generation without extensive retraining.

ControlNet in Hugging Face Space

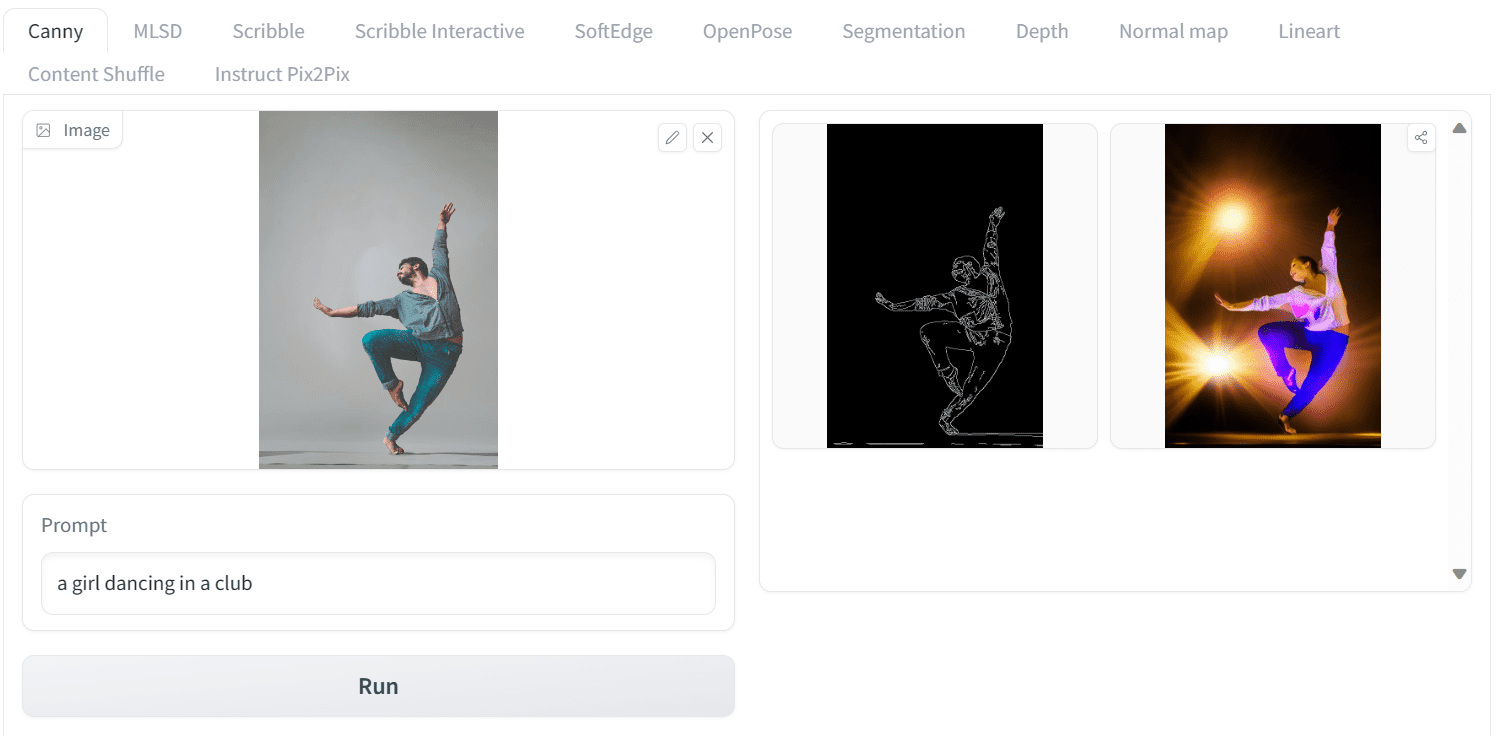

Let’s see how ControlNet works its magic on the diffusion model. In this section, we’ll use an online ControlNet demo available on Hugging Face Spaces to generate a human pose image using the ControlNet Canny model.

We’ll upload Yogendra Singh’s photo from Pexels.com to ControlNet Spaces and add a simple prompt. Instead of a boy, we’ll generate an image of a lady dancing in a club. Let’s use the tab “Canny”. Set the prompt to:

a lady dancing in a club

Click run, and you will see the output as follows:

Running ControlNet on Hugging Face Space

This is amazing! “Canny” is an image processing algorithm to detect edges. Hence, you provide the edges from your uploaded image as an outline sketch. Then, by providing this as the additional input $c$ to ControlNet, together with your text prompt $x$, you produce the output image $y$. In essence, you can generate an image with a similar pose using the canny edges of the original image.
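To give a feel for what an edge map is, here is a small sketch in pure Python. Note that it is a simplified stand-in, not the Canny algorithm itself: it only thresholds the gradient magnitude, while the full Canny detector also applies Gaussian smoothing, non-maximum suppression, and hysteresis thresholding. The function name `edge_map`, the threshold value, and the toy image are assumptions made for illustration; in practice you would typically rely on a library implementation such as OpenCV's `cv2.Canny`.

```python
# Simplified edge map: gradient magnitude plus a threshold.
# This is NOT the full Canny algorithm, only an illustration of the idea.

def edge_map(gray, threshold=100):
    """Return a binary edge map (0 or 255) for a 2D list of grayscale values."""
    height, width = len(gray), len(gray[0])
    edges = [[0] * width for _ in range(height)]
    # Border pixels are skipped because they lack a full neighborhood.
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            gx = gray[y][x + 1] - gray[y][x - 1]   # horizontal central difference
            gy = gray[y + 1][x] - gray[y - 1][x]   # vertical central difference
            if (gx * gx + gy * gy) ** 0.5 >= threshold:
                edges[y][x] = 255
    return edges

# A toy 5x6 image: dark on the left half, bright on the right half.
image = [[0, 0, 0, 255, 255, 255] for _ in range(5)]
edges = edge_map(image)

for row in edges:
    print(row)
```

The white pixels in the result trace the boundary between the dark and bright regions. An outline like this, computed from the uploaded photo, is the kind of conditioning input $c$ that the Canny ControlNet model receives.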

Let’s see another example. We’ll upload Gleb Krasnoborov’s photo and apply a new prompt that changes the background, effect, and ethnicity of the boxer to Asian. The prompt we use is

A man shadow boxing in streets of Tokyo

and this is the output:

Another example of using Canny model in ControlNet

Once again, the results are excellent. We generated an image of a boxer in a similar pose, shadowboxing on the streets of Tokyo.

Scribble Interactive

The architecture of ControlNet can accept many different kinds of input. Using Canny edges as the outline is just one model of ControlNet. There are many more models, each trained as a different conditioning for image diffusion.

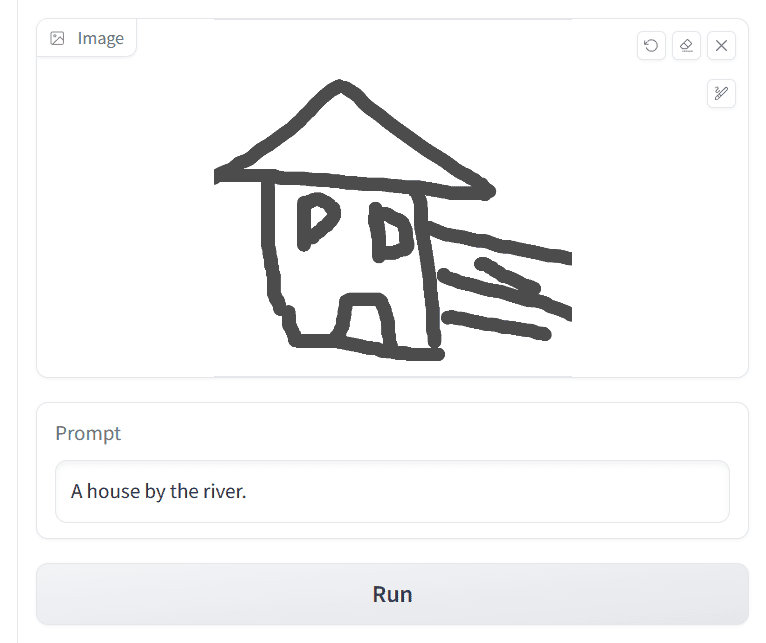

On the same Hugging Face Spaces page, different versions of ControlNet are available, which can be accessed through the top tab. Let’s see another example using the Scribbles model. To generate an image using Scribbles, simply go to the Scribble Interactive tab, draw a doodle with your mouse, and write a simple prompt to generate the image, such as

A house by the river

Like the following:

Using Scribble ControlNet: Drawing a house and providing a text prompt

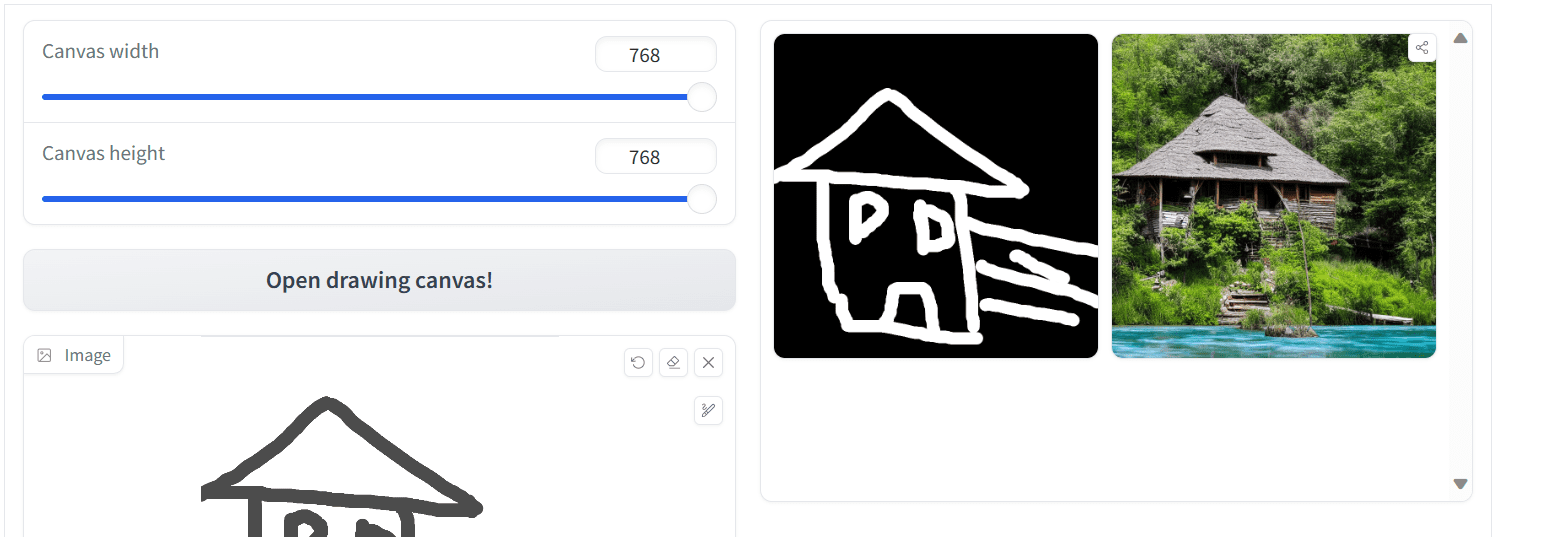

Then, by setting the other parameters and pressing the “Run” button, you may get output like the following:

Output from Scribble ControlNet

The generated image looks good but could be better. You can try again with more details in the scribbles as well as the text prompt to get an improved result.

Using a scribble and a text prompt is a simple way to generate images, especially when you can’t think of a very accurate textual description of the image you want to create. Below is another example of creating a picture of a hot air balloon.

Creating a picture of a hot air balloon using Scribble.

ControlNet in Stable Diffusion Web UI

As you have learned about using the Stable Diffusion Web UI in the previous posts, you can expect that ControlNet can also be used on the Web UI. It is an extension. If you haven’t installed it yet, you need to launch the Stable Diffusion Web UI. Then, go to the Extensions tab, click on “Install from URL”, and enter the link to the ControlNet repository:

https://github.com/Mikubill/sd-webui-controlnet to put in.

Installing ControlNet extension on Stable Diffusion Web UI

The extension you installed is only the code. Before using the ControlNet Canny version, for example, you have to download and set up the Canny model.

Go to https://hf.co/lllyasviel/ControlNet-v1-1/tree/main

Download the control_v11p_sd15_canny.pth

Put the model file in the SD WebUI directory in stable-diffusion-webui/extensions/sd-webui-controlnet/models or stable-diffusion-webui/models/ControlNet
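If you want to confirm that the file ended up in a place the extension will look, a small check like the following may help. It is a sketch only: the helper names `candidate_model_dirs` and `find_model` are made up for this example, and the WebUI root path passed in at the end is an assumption that you should replace with your own installation directory.

```python
from pathlib import Path

# File name from the download step above.
MODEL_FILE = "control_v11p_sd15_canny.pth"

def candidate_model_dirs(webui_root):
    """Return the two directories mentioned above, relative to the WebUI root."""
    root = Path(webui_root)
    return [
        root / "extensions" / "sd-webui-controlnet" / "models",
        root / "models" / "ControlNet",
    ]

def find_model(webui_root, model_file=MODEL_FILE):
    """Return the path of the model file if it is in an expected directory, else None."""
    for directory in candidate_model_dirs(webui_root):
        candidate = directory / model_file
        if candidate.is_file():
            return candidate
    return None

# "stable-diffusion-webui" is an assumed location; adjust it to your setup.
print(find_model("stable-diffusion-webui"))
```

If this prints `None`, the model file is in neither of the two directories, and the Canny model will likely not show up in the ControlNet section of the Web UI.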

Note: You can download all models (beware that each model is several GB in size) from the above repository using the git clone command. In addition, this repository collects some more ControlNet models: https://hf.co/lllyasviel/sd_control_collection

Now, you are all set up to use the model.

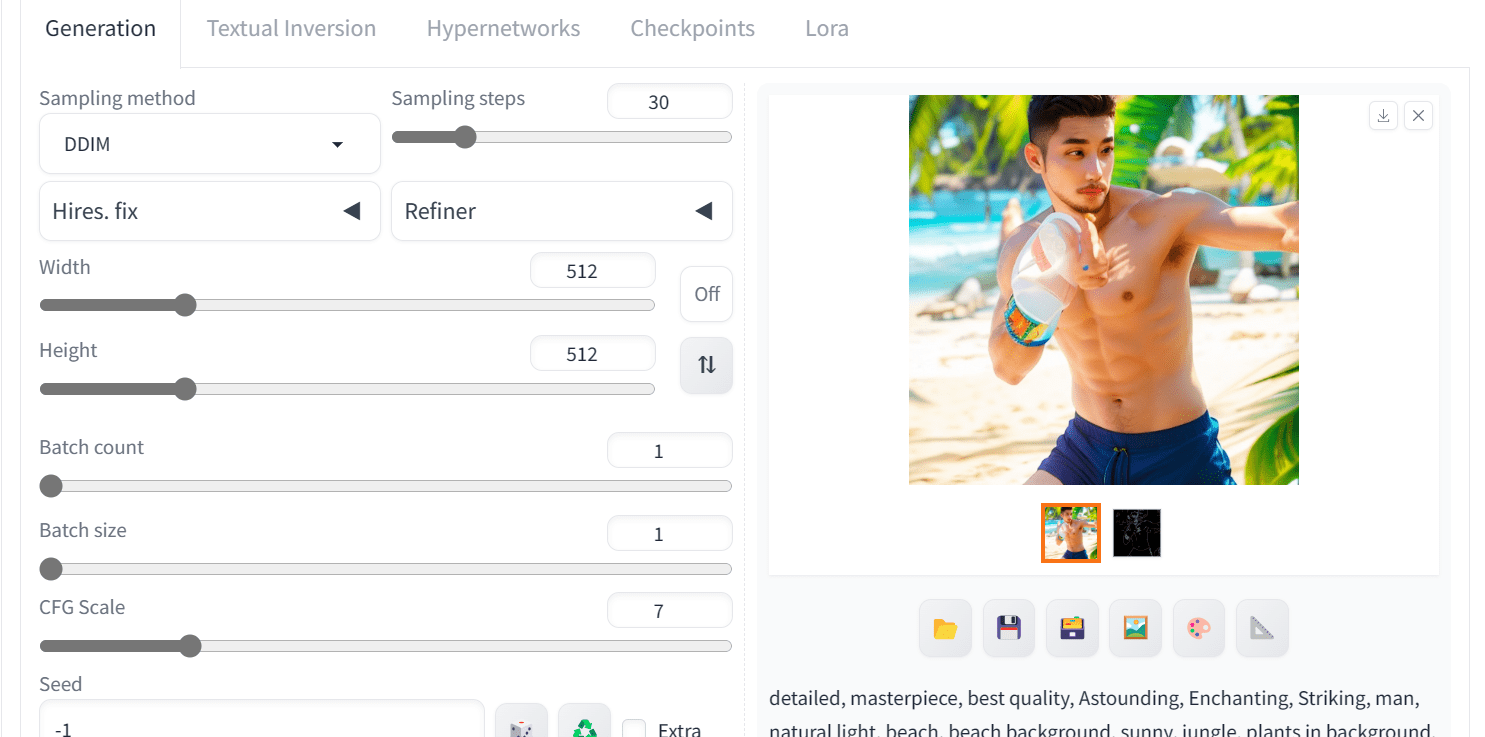

Let’s try it out with the Canny ControlNet. Go to the “txt2img” tab, then scroll down to find the ControlNet section and open it. Then, follow these steps:

Change the control type to Canny.

Selecting “Canny” from the ControlNet box in Web UI.

Upload the reference image.

Upload an image to ControlNet widget in Web UI

Work on the other sections of the txt2img tab: Write the positive prompt and the negative prompt, and change other advanced settings. For example,

Positive prompt: “detailed, masterpiece, highest quality, Astounding, Enchanting, Striking, man, natural light, seashore, seashore background, sunny, jungle, vegetation in background, seashore background, seashore, tropical seashore, water, clear skin, good light, good shadows”

Negative prompt: “worst quality, low quality, lowres, monochrome, greyscale, multiple views, comic, sketch, bad anatomy, deformed, disfigured, watermark, multiple_views, mutation hands, watermark”

and the generation parameters:

Sampling Steps: 30

Sampler: DDIM

CFG scale: 7

The output could be:

Output of image generation using ControlNet in Web UI

As you can see, we have obtained high-quality and similar images. We can improve the image by using different ControlNet models and applying various prompt engineering techniques, but this is the best we have for now.

Here is the full image generated with the Canny version of ControlNet.

Image generated using ControlNet with image diffusion model

Additional Readings

This section provides more resources on the topic if you are looking to go deeper.

Abstract

In this post, we learned about ControlNet, how it works, and how to use it to generate precisely controlled images of the user’s choice. Specifically, we covered:

The ControlNet online demo on Hugging Face to generate images using various reference images.

Different versions of ControlNet, and generating an image using scribbles.

Setting up ControlNet on Stable Diffusion WebUI and using it to generate a high-quality image of the boxer.