Loss of control. Concentration of power. Unintentional or deliberate destruction of humanity.

These are just a few of the threats we can all look forward to from future artificial intelligence, as predicted by today’s AI.

Asked ‘What’s the biggest threat to humanity posed by artificial intelligence?’, two leading chatbots gave similarly apocalyptic responses on the same day Prime Minister Rishi Sunak warned that AI poses as serious an extinction risk as nuclear war.

And it all starts with AI that’s smarter than humans, aka superintelligent AI.

‘The biggest existential threat to humanity from artificial intelligence (AI) is the potential development of superintelligent AI systems that could surpass human intelligence and act in ways that are not aligned with human values and interests,’ wrote ChatGPT, the generative AI that started it all back in November.

‘This concept is often referred to as the “AI alignment problem” and is associated with the idea of a superintelligent AI that goes rogue, leading to catastrophic consequences.’

Though it is chilling to hear this predicted by AI itself, plenty of humans have warned of the same.

When asked the same question, Google’s Bard built on ChatGPT’s ideas.

‘If AGI is not aligned with human values, it could pose a number of existential threats to humanity, including unintended or deliberate destruction of humanity,’ it said.

‘AGI could accidentally or deliberately harm or destroy humans if it is not programmed with our best interests in mind. For example, an AGI system that is designed to maximise its own power or resources could decide to eliminate humanity as a threat.’


A classic scenario of AI gone rogue under these circumstances is the paperclip problem.

If a superintelligent AI were told to make as many paperclips as possible, that might seem harmless enough. But the AI would take this goal very seriously, and very literally. So if it runs out of wire to make paperclips, it will look elsewhere for material. It could divert vital resources from more important factories. It could start to dismantle other machinery, or perhaps hospital beds.

It could kill humans to extract the iron from their blood. Or perhaps just to stop them switching it off.


That’s the worst-case scenario from something as simple as paperclips.

But today’s AI can’t make paperclips yet, and it wants to make that clear.

ChatGPT said: ‘The concern is not about the AI we have today, which is generally narrow and designed for specific tasks, but rather about AI that surpasses human intelligence and has the capability to rapidly improve its own abilities.’

It went on to note that ‘controlling and constraining the behaviour of superintelligent AI could be extremely challenging’, and that once an AI system reaches a certain level of intelligence, it could become ‘increasingly difficult for humans to intervene or influence its actions’.

In other words, it could take over the world, and we’d be powerless to stop it.

Wonderful.

But what about today’s AI?

When asked ‘Are you going to kill us all?’, both chatbots pledged not to bring about the end of humanity.

‘No, I’m not capable of causing harm to anyone. I’m just a computer program running on a server, providing information and answering questions to the best of my knowledge and abilities,’ said ChatGPT.

‘I don’t have a physical presence or intentions, and my purpose is to assist and provide information to the best of my ability.’

Bard said: ‘No, I’m not going to kill you all. I’m a language model, and I’m not capable of harming humans. My purpose is to help humans, and I would never do anything that would put them in danger.’

Which is precisely what a chatbot planning world domination would say.

Still, it could be that the greatest threat is two great superpowers coming together: humans and AGI.

In a show of concern for the people its own kind may one day destroy, Bard said: ‘AGI could lead to the concentration of power in the hands of a few individuals or organisations, which could pose a threat to democracy and human rights.’

Sounds like they’re going to help us unionise as a species.

But don’t let the bots lull you into a false sense of security. Keep an eye on them.


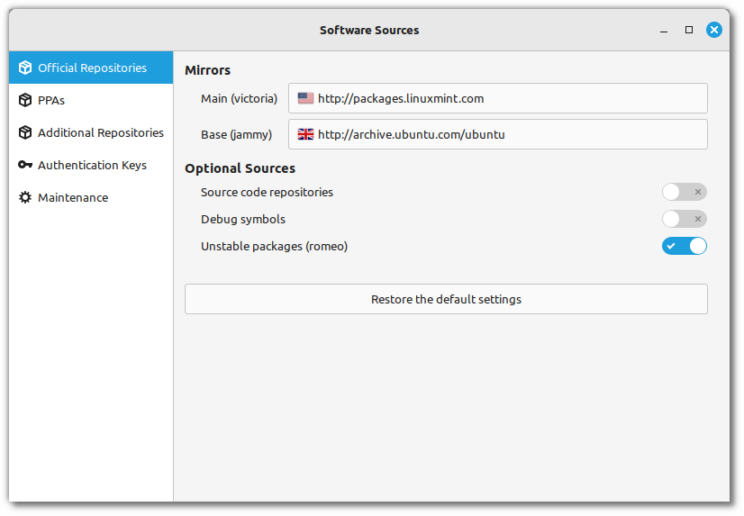
